12 game-changing moments in the history of artificial intelligence (AI)

From Alan Turing's seminal paper to the advent of ChatGPT, here are 12 pivotal moments in the history of artificial intelligence.

- 1950 — Alan Turing's seminal AI paper

- 1956 — The Dartmouth workshop

- 1966 — First AI chatbot

- 1974-1980 — First "AI winter"

- 1980 — Flurry of "expert systems"

- 1986 — Foundations of deep learning

- 1987-1993 — Second AI winter

- 1997 — Deep Blue's defeat of Garry Kasparov

- 2012 — AlexNet ushers in the deep learning era

- 2016 — AlphaGo's defeat of Lee Sedol

- 2017 — Invention of the transformer architecture

- 2022 – Launch of ChatGPT

Artificial intelligence (AI) has forced its way into the public consciousness thanks to the advent of powerful new AI chatbots and image generators. But the field has a long history stretching back to the dawn of computing. Given how fundamental AI could be in changing how we live in the coming years, understanding the roots of this fast-developing field is crucial. Here are 12 of the most important milestones in the history of AI.

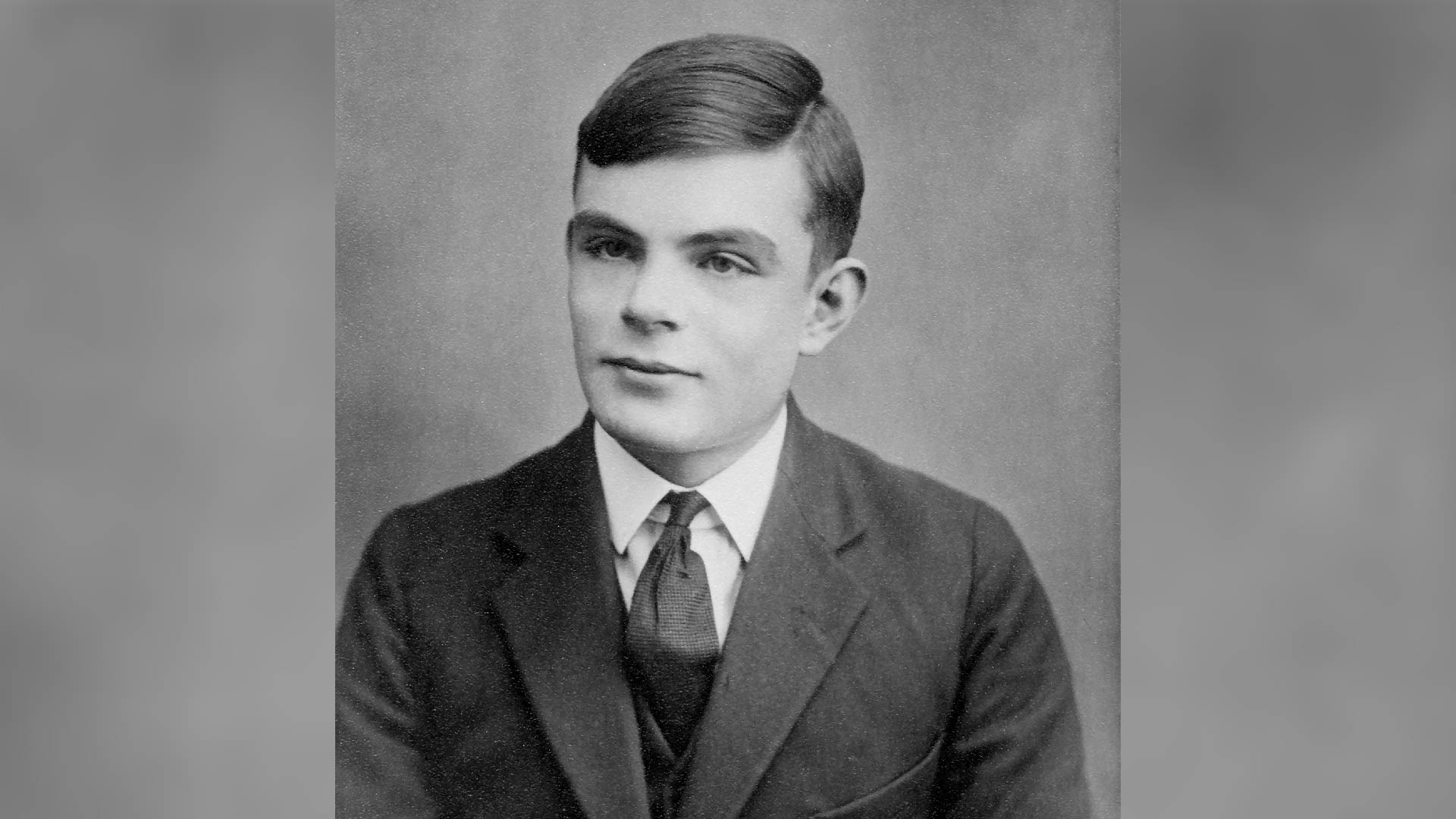

1950 — Alan Turing's seminal AI paper

Renowned British computer scientist Alan Turing published a paper titled "Computing Machinery and Intelligence," which was one of the first detailed investigations of the question "Can machines think?"

Answering this question requires you to first tackle the challenge of defining "machine" and "think." So, instead, he proposed a game: A human judge would exchange typed messages with both a machine and a human and try to determine which was which. If the judge couldn't do so reliably, the machine would win the game. While this didn't prove a machine was "thinking," the Turing Test, as it came to be known, has been an important yardstick for AI progress ever since.

1956 — The Dartmouth workshop

AI as a scientific discipline can trace its roots back to the Dartmouth Summer Research Project on Artificial Intelligence, held at Dartmouth College in 1956. The participants were a who's who of influential computer scientists, including John McCarthy, Marvin Minsky and Claude Shannon. The term "artificial intelligence" had been coined in the 1955 proposal for the workshop, and the group spent almost two months discussing how machines might simulate learning and intelligence. The meeting kick-started serious research on AI and laid the groundwork for many of the breakthroughs that came in the following decades.

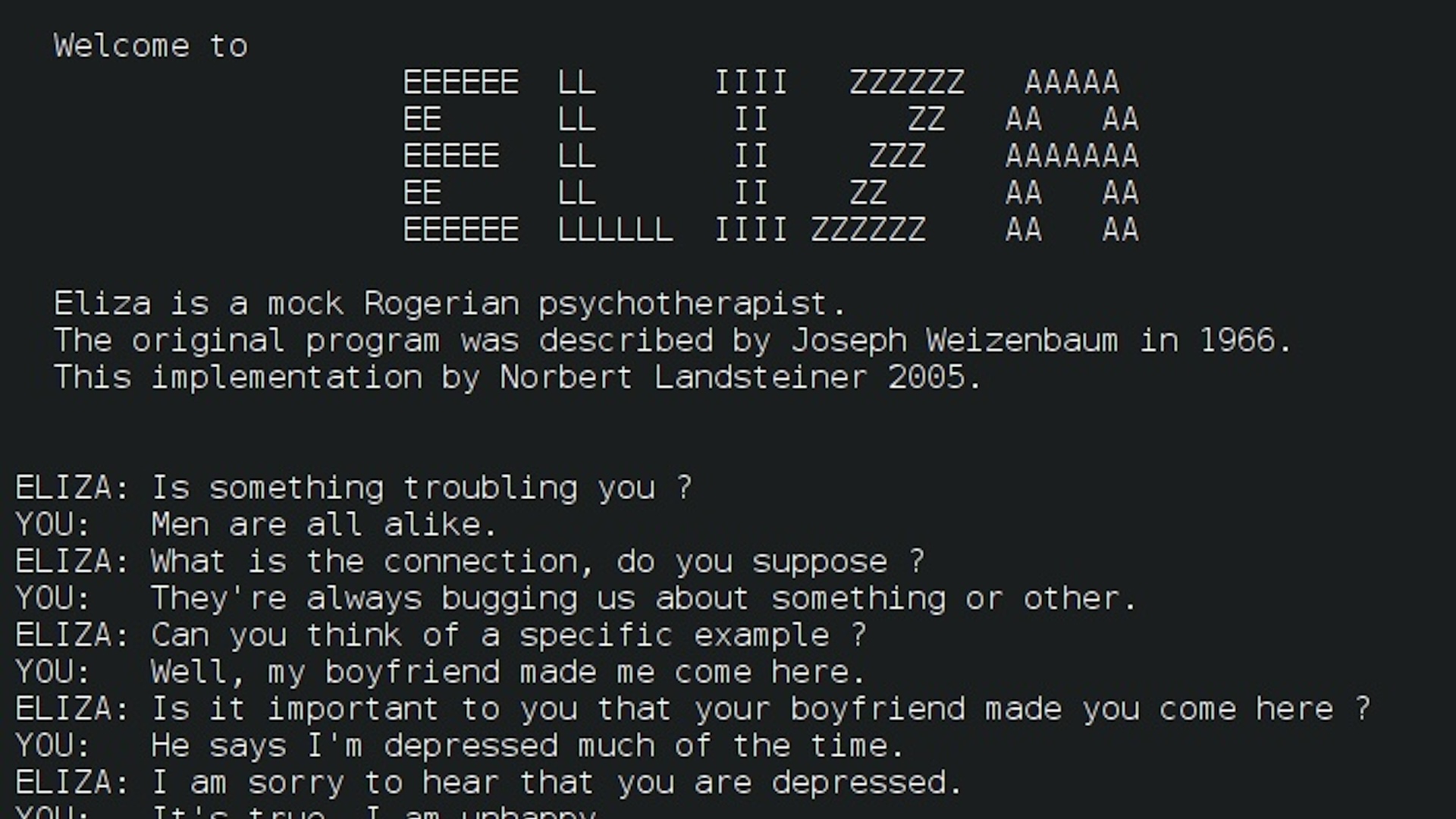

1966 — First AI chatbot

MIT researcher Joseph Weizenbaum unveiled the first-ever AI chatbot, known as ELIZA. The underlying software was rudimentary and regurgitated canned responses based on the keywords it detected in the prompt. Nonetheless, when Weizenbaum programmed ELIZA to act as a psychotherapist, people were reportedly amazed at how convincing the conversations were. The work stimulated growing interest in natural language processing, including from the U.S. Defense Advanced Research Projects Agency (DARPA), which provided considerable funding for early AI research.
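
To give a feel for how keyword-driven canned responses can work, here is a minimal Python sketch. It is not ELIZA's original script: the rules, the `respond` function and the replies are all invented for illustration.

```python
import re

# Hypothetical keyword rules for illustration; not ELIZA's original script.
RULES = [
    (re.compile(r"\bmother\b|\bfather\b", re.IGNORECASE), "Tell me more about your family."),
    (re.compile(r"\bI feel (.+)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\balways\b", re.IGNORECASE), "Can you think of a specific example?"),
]
DEFAULT_REPLY = "Please go on."


def respond(message: str) -> str:
    """Return the canned reply for the first rule whose keyword appears."""
    for pattern, template in RULES:
        match = pattern.search(message)
        if match:
            # Reuse any captured text in the reply, minus trailing punctuation.
            captured = [group.rstrip(".!?") for group in match.groups() if group]
            return template.format(*captured)
    return DEFAULT_REPLY


print(respond("I feel sad today."))
```

The program has no understanding of the conversation. It simply echoes part of the user's sentence back inside a stock phrase, which may be enough to feel convincing in a therapy-style exchange.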

1974-1980 — First "AI winter"

It didn't take long before early enthusiasm for AI began to fade. The 1950s and 1960s had been a fertile time for the field, but in their enthusiasm, leading experts made bold claims about what machines would be capable of doing in the near future. The technology's failure to live up to those expectations led to growing discontent. A highly critical report on the field by British mathematician James Lighthill led the U.K. government to cut almost all funding for AI research. DARPA also drastically cut back funding around this time, leading to what would become known as the first "AI winter."

1980 — Flurry of "expert systems"

Despite disillusionment with AI in many quarters, research continued — and by the start of the 1980s, the technology was starting to catch the eye of the private sector. In 1980, researchers at Carnegie Mellon University built an AI system called R1 for the Digital Equipment Corporation. The program was an "expert system" — an approach to AI that researchers had been experimenting with since the 1960s. These systems used logical rules to reason through large databases of specialist knowledge. The program saved the company millions of dollars a year and kicked off a boom in industry deployments of expert systems.
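
The rule-based reasoning described above can be sketched in a few lines of Python. This is a toy example of one common approach, known as forward chaining, and is not drawn from R1 itself: the rule names, the facts and the `forward_chain` function are hypothetical.

```python
# Hypothetical rules for illustration only; these are not taken from R1.
# Each rule says: if all the conditions are known facts, add the conclusion.
RULES = [
    ({"needs_graphics", "has_free_slot"}, "add_graphics_card"),
    ({"add_graphics_card"}, "needs_bigger_power_supply"),
    ({"needs_bigger_power_supply"}, "add_power_supply_upgrade"),
]


def forward_chain(initial_facts):
    """Apply the rules repeatedly until no new facts can be derived."""
    facts = set(initial_facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in RULES:
            # A rule fires when every condition is already a known fact.
            if conditions <= facts and conclusion not in facts:
                facts.add(conclusion)
                changed = True
    return facts


print(sorted(forward_chain({"needs_graphics", "has_free_slot"})))
```

Real expert systems of the era held thousands of such rules, all written and maintained by hand.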

1986 — Foundations of deep learning

Most research thus far had focused on "symbolic" AI, which relied on handcrafted logic and knowledge databases. But since the birth of the field, there had also been a rival stream of research into "connectionist" approaches that were inspired by the brain. This had continued quietly in the background and finally came to the fore in the 1980s. Rather than programming systems by hand, these techniques involved coaxing "artificial neural networks" to learn rules by training on data. In theory, this would lead to more flexible AI not constrained by the maker's preconceptions, but training neural networks proved challenging. In 1986, Geoffrey Hinton, who would later be dubbed one of the "godfathers of deep learning," published a paper with David Rumelhart and Ronald Williams popularizing "backpropagation," the training technique underpinning most AI systems today.
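
For readers curious about what backpropagation involves, the Python sketch below applies it to a tiny network. It is a simplified illustration rather than the method as published in 1986: the network size (two inputs, two hidden units, one output), the absence of bias terms, the squared-error loss and all function names are assumptions made to keep the example short.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(params, x):
    """Run the network; return the hidden activations and the output."""
    w_hidden, w_out = params
    hidden = [sigmoid(sum(w * xi for w, xi in zip(row, x))) for row in w_hidden]
    output = sigmoid(sum(w * h for w, h in zip(w_out, hidden)))
    return hidden, output


def loss(params, x, target):
    """Squared error between the network's output and the target."""
    _, output = forward(params, x)
    return 0.5 * (output - target) ** 2


def backprop(params, x, target):
    """Return the gradient of the loss for every weight, via the chain rule."""
    w_hidden, w_out = params
    hidden, output = forward(params, x)
    # Error signal at the output unit.
    delta_out = (output - target) * output * (1.0 - output)
    grad_out = [delta_out * h for h in hidden]
    # Pass the error signal backward through each hidden unit.
    delta_hidden = [
        delta_out * w_out[j] * hidden[j] * (1.0 - hidden[j])
        for j in range(len(hidden))
    ]
    grad_hidden = [[d * xi for xi in x] for d in delta_hidden]
    return grad_hidden, grad_out


def train_step(params, x, target, learning_rate=0.5):
    """Nudge every weight a small step against its gradient."""
    w_hidden, w_out = params
    grad_hidden, grad_out = backprop(params, x, target)
    new_hidden = [
        [w - learning_rate * g for w, g in zip(row, grad_row)]
        for row, grad_row in zip(w_hidden, grad_hidden)
    ]
    new_out = [w - learning_rate * g for w, g in zip(w_out, grad_out)]
    return new_hidden, new_out
```

Repeating `train_step` on many examples gradually reduces the error, which is how a network "learns" its rules from data instead of having them written by hand.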

1987-1993 — Second AI winter

Following their experiences in the 1970s, Minsky and fellow AI researcher Roger Schank warned that AI hype had reached unsustainable levels and the field was in danger of another contraction. They coined the term "AI winter" in a panel discussion at the 1984 meeting of the American Association for Artificial Intelligence (now the Association for the Advancement of Artificial Intelligence). Their warning proved prescient, and by the late 1980s, the limitations of expert systems and their specialized AI hardware had started to become apparent. Industry spending on AI fell dramatically, and most fledgling AI companies went bust.

1997 — Deep Blue's defeat of Garry Kasparov

Despite repeated booms and busts, AI research made steady progress during the 1990s, largely out of the public eye. That changed in 1997, when Deep Blue, a chess-playing computer built by IBM, beat chess world champion Garry Kasparov in a six-game match. Aptitude in the complex game had long been seen by AI researchers as a key marker of progress. Defeating the world's best human player, therefore, was seen as a major milestone and made headlines around the world.

2012 — AlexNet ushers in the deep learning era

Despite a rich body of academic work, neural networks were seen as impractical for real-world applications. To be useful, they needed to have many layers of neurons, but implementing large networks on conventional computer hardware was prohibitively inefficient. In 2012, Alex Krizhevsky, a doctoral student of Hinton, won the ImageNet computer vision competition by a large margin with a deep-learning model called AlexNet. The secret was to use specialized chips called graphics processing units (GPUs) that could efficiently run much deeper networks. This set the stage for the deep-learning revolution that has powered most AI advances ever since.

2016 — AlphaGo's defeat of Lee Sedol

While AI had already left chess in its rearview mirror, the much more complex Chinese board game Go had remained a challenge. But in 2016, Google DeepMind's AlphaGo beat Lee Sedol, one of the world's greatest Go players, over a five-game series. Experts had assumed such a feat was still years away, so the result led to growing excitement around AI's progress. This was partly due to the general-purpose nature of the algorithms underlying AlphaGo, which relied on an approach called "reinforcement learning." In this technique, AI systems effectively learn through trial and error. DeepMind later extended and improved the approach to create AlphaZero, which taught itself to play Go, chess and shogi from scratch.
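
Learning by trial and error can be illustrated with a much simpler problem than Go. The Python sketch below is a toy and not AlphaGo's algorithm: it assumes a made-up game with three options that pay out with different hidden probabilities, and the `run_bandit` function and its parameters are invented for the example.

```python
import random


def run_bandit(payout_probs, steps=5000, epsilon=0.1, seed=0):
    """Learn by trial and error which option pays off most often."""
    rng = random.Random(seed)
    counts = [0] * len(payout_probs)
    estimates = [0.0] * len(payout_probs)
    for _ in range(steps):
        if rng.random() < epsilon:
            # Explore: occasionally try an option at random.
            action = rng.randrange(len(payout_probs))
        else:
            # Exploit: pick the option that currently looks best.
            action = max(range(len(estimates)), key=estimates.__getitem__)
        reward = 1.0 if rng.random() < payout_probs[action] else 0.0
        counts[action] += 1
        # Move the running average toward the observed reward.
        estimates[action] += (reward - estimates[action]) / counts[action]
    return estimates


estimates = run_bandit([0.2, 0.5, 0.8])
best = max(range(len(estimates)), key=estimates.__getitem__)
print("Best option found:", best)
```

The program is never told which option is best. It works that out from the rewards it receives, which is the core idea that reinforcement learning scales up to far harder problems.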

2017 — Invention of the transformer architecture

Despite significant progress in computer vision and game playing, deep learning was making slower progress with language tasks. Then, in 2017, Google researchers published a novel neural network architecture called a "transformer," which could ingest vast amounts of data and make connections between distant data points. This proved particularly useful for the complex task of language modeling and made it possible to create AIs that could simultaneously tackle a variety of tasks, such as translation, text generation and document summarization. Most of today's leading AI models rely on this architecture, including image generators like OpenAI's DALL-E, as well as Google DeepMind's revolutionary protein folding model AlphaFold 2.
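
The mechanism that lets a transformer connect distant data points is called "attention." The Python sketch below shows a stripped-down version, assuming each data point is a short list of numbers. Real transformers add learned weight matrices, multiple attention "heads" and position information, all of which are omitted here, and the function names are invented for illustration.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(vectors):
    """Scaled dot-product attention with the inputs used as queries, keys and values."""
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Score every position against the current one, however far apart they are.
        scores = [
            sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
            for key in vectors
        ]
        weights = softmax(scores)
        # Blend all positions together according to their weights.
        mixed = [sum(w * v[i] for w, v in zip(weights, vectors)) for i in range(dim)]
        outputs.append(mixed)
        all_weights.append(weights)
    return outputs, all_weights


# The first and last items are similar, so they attend strongly to each other.
outputs, weights = self_attention([[1.0, 0.0], [0.0, 1.0], [0.0, 1.0], [1.0, 0.0]])
print([round(w, 3) for w in weights[0]])
```

Because every position is compared with every other position in one step, distance within the sequence does not weaken the connection between related items.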

2022 – Launch of ChatGPT

On Nov. 30, 2022, OpenAI released a chatbot powered by a large language model from its GPT-3.5 series. Known as "ChatGPT," the tool became a worldwide sensation, garnering more than a million users in less than a week and an estimated 100 million within about two months. For many members of the public, it was the first chance to interact directly with a cutting-edge AI model, and most were blown away. The service is credited with starting an AI boom that has seen billions of dollars invested in the field and spawned numerous copycats from big tech companies and startups. It has also led to growing unease about the pace of AI progress, prompting an open letter from prominent tech leaders calling for a six-month pause in the training of the most powerful AI systems to allow time to assess the implications of the technology.