Recipe for a Replicant: 5 Steps to Building a Blade Runner-Style Android

Building a replicant

"Blade Runner 2049" hits theaters on Friday, Oct. 6. The sci-fi thriller will serve as a distant sequel to the original "Blade Runner" film from 1982, in which a faction of advanced humanoid robots becomes murderous in its quest to extend the robots' artificially shortened life spans.

The robots, called replicants, are nearly indistinguishable from humans in every way except for their emotions. They're so similar that it takes special police officers called Blade Runners, played by Harrison Ford and Ryan Gosling, to administer a fictional Voight-Kampff test — not unlike a lie detector test for emotional responses — in order to tell them apart from real humans.

As real-world robotics becomes more and more advanced by the day, one might wonder how far off we really are from creating truly life-like, autonomous replicants. In order to do so, we'll need to sort out a few key aspects of robotics and artificial intelligence. Here's what we'd need to build a Blade Runner-like replicant.

Create a brain that can learn

The quest toward a true, generalized artificial intelligence that requires neither training nor supervision to learn about the world has thus far eluded scientists.

Most machine learning systems use either supervised or adversarial learning. In supervised learning, a human programmer provides the machine with thousands of examples to jumpstart its knowledge base. With adversarial learning, a computer trains itself against another computer or itself to optimize its own behavior. Adversarial learning is practical only for gaming — a chess-playing computer can play countless games against itself per minute but knows nothing else about the world.

The problem is that many researchers want to base artificial intelligence on the human brain, but basic knowledge of neuroscience progresses at a different rate than do our technological capabilities and the ethical discussions over what it means to be intelligent, conscious and self-aware. [Super-Intelligent Machines: 7 Robotic Futures]

Program emotion into artificial intelligence

The one way to tell a replicant from a human is that the machines have misplaced and inappropriate emotional reactions. That's good, because scientists are really bad at programming emotion into intelligent machines. But replicants still have some semblance of emotion, which makes them more advanced than today's machines.

In order to teach emotional salience to robots, programmers need to use supervised learning just as they would to train image-detection software, according to Jizhong Xiao, the head of the robotics program at City College of New York. For example, a computer would need to face thousands of examples of a smile before it could detect and comprehend one on its own.
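As a rough illustration of what supervised learning from labeled examples looks like, here is a minimal Python sketch. It is a toy, not how any real emotion-recognition system works: the two numeric features (how far the mouth corners lift, how much the eyes crinkle), the four training examples and the function names `train` and `predict` are all invented for this example, and a real system would need thousands of images rather than four hand-written numbers.

```python
from collections import defaultdict
from math import dist


def train(examples):
    """Average the feature vectors for each label (a nearest-centroid model)."""
    sums = {}
    counts = defaultdict(int)
    for features, label in examples:
        if label not in sums:
            sums[label] = [0.0] * len(features)
        for i, value in enumerate(features):
            sums[label][i] += value
        counts[label] += 1
    return {
        label: tuple(v / counts[label] for v in total)
        for label, total in sums.items()
    }


def predict(centroids, features):
    """Return the label whose averaged example is closest to the new input."""
    return min(centroids, key=lambda label: dist(centroids[label], features))


# Hypothetical labeled data: (mouth-corner lift, eye crinkle) -> label.
labeled_examples = [
    ((0.9, 0.8), "smile"),
    ((0.8, 0.7), "smile"),
    ((0.1, 0.2), "neutral"),
    ((0.2, 0.1), "neutral"),
]

model = train(labeled_examples)
print(predict(model, (0.85, 0.9)))  # prints "smile"
```

The machine never receives a definition of a smile. It only sees examples a human has already labeled, which is why the quantity and quality of those examples matter so much.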

The machines would also need to comprehend emotional language. While some preliminary work has been done to teach context and proper language comprehension to computers by making an artificial intelligence agent read the entirety of Wikipedia, our AI isn't quite ready to take on the guise of a human as replicants do.

Make life-like skin that can heal

Live skin is not as easy to replicate as it sounds. While hydrogels can make plastics feel more like living tissue, and the silicone that coats some modern robots may feel similar to real flesh, neither passes for actual tissue, especially given that the material would have to last for a replicant's entire four-year life span.

A robot that was put on display at a recent convention had to undergo expensive repairs after too many passers-by manhandled it. That's because even though artificial skins seem increasingly life-like, they don't possess skin's ability to self-repair. Rather, each tear and stretch will only compound over time. Some attempts to generate self-repairing plastics found early success, but they were only able to self-repair once.

The "Terminator" film series had a clever solution to the skin problem: Rather than being fully synthetic machines, the terminators were described as simply robots encased in living tissue.

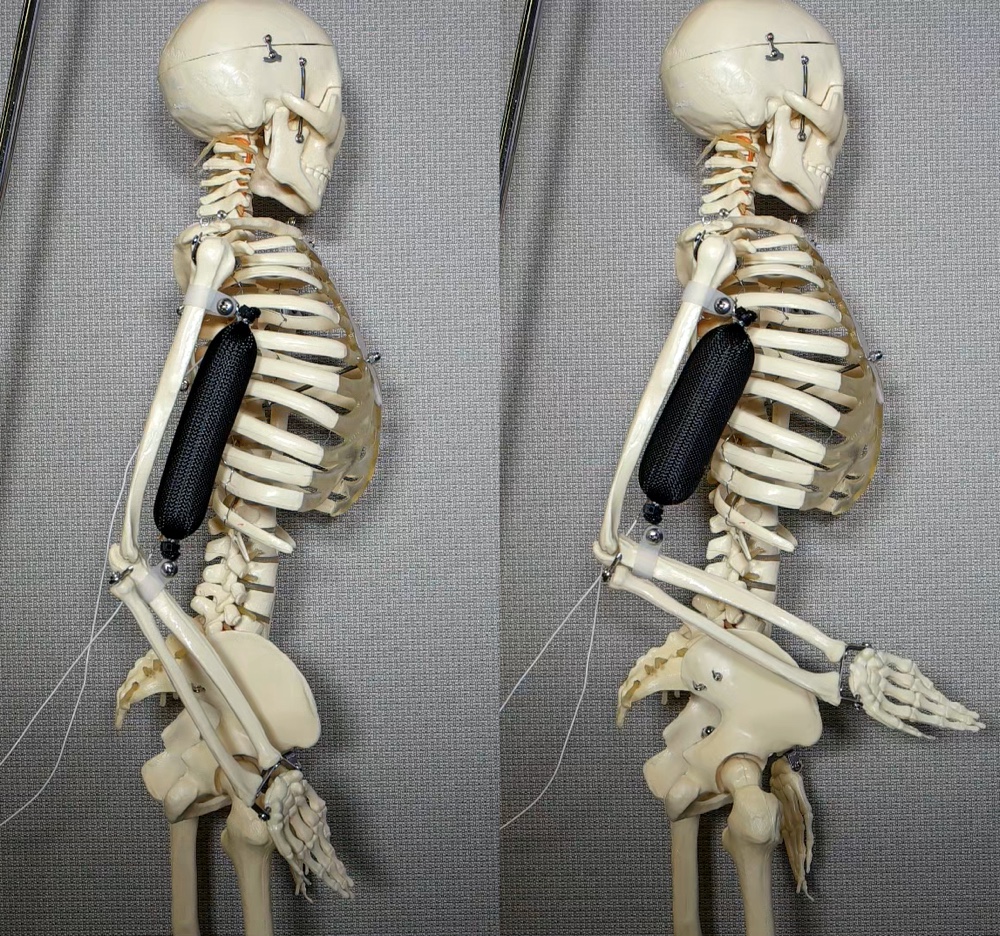

Craft soft, strong artificial muscles

There's no way around it — modern robots just look clunky. In order to build replicants with smooth, life-like motions, we're going to need to move beyond robots that can perform only simple, stiff movements.

To solve this, some teams are working on making soft, artificial "muscles" for robots and prosthetics that may help smooth things out a bit.

Zheng Chen, a mechanical engineer at the University of Houston, recently received a grant to develop artificial muscles and tendons to make better prosthetics than those that are powered by conventional motors. And a team of engineers from Columbia University developed a soft, low-density synthetic muscle that can lift up to 1,000 times its own weight, according to research published online Sept. 19, 2017, in the journal Nature Communications.

While these muscles are still in the proof-of-concept phase, they could someday help improve so-called soft machines and make them more common.

Construct hands that can grasp like a human

Most people have little problem picking up an egg and carefully cracking it open over a bowl. But for a robot, this is a logistical nightmare.

Robots will need a whole host of capabilities to successfully interact with the physical world: image detection, knowledge of context and how objects work, tactile feedback so they can balance objects without squeezing too hard, and the ability to make small, gentle and careful motions.
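The tactile-feedback piece of that list can be sketched as a simple loop: tighten the grip a little, check whether the object is still slipping, and stop as soon as it holds. The Python below is only a simulation under stated assumptions. The function name `grip`, the force values in millinewtons and the idea of a single "required force" number are hypothetical stand-ins; a real hand would read slip from tactile sensors rather than being told the answer.

```python
def grip(required_mn, crush_mn, step_mn=50):
    """Tighten in small steps until the simulated object stops slipping.

    required_mn: made-up force (millinewtons) at which slipping stops.
    crush_mn:    made-up force at which the object would break.
    """
    force = 0
    while force < required_mn:  # stands in for a "still slipping" sensor reading
        force += step_mn
        if force >= crush_mn:
            raise RuntimeError("object would break before it could be held")
    return force


# A hypothetical egg: holds at 1,500 mN, cracks at 3,000 mN.
print(grip(1500, 3000))  # prints 1500
```

The small step size is the point. A hand that can only jump between "open" and "full squeeze" has no safe setting for an egg, while one that can creep upward and stop on feedback does.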

Some robots, like Flobi from Germany's Bielefeld University or GelSight from MIT, have achieved rudimentary success when it comes to finding objects, picking them up and putting them back down, but they can't do so quickly or smoothly enough to pass as human the way a replicant can. And never mind doing so autonomously: these robots operate only in carefully constructed laboratory settings where the things they need to grab are sitting right in front of them.