How Evolution May Help Build Better Robots

NEW YORK — In the real world, animals have evolved the ability to get from point A to B by galloping, crawling and jumping. Now, robots in the virtual world have accomplished something similar.

In new work, researchers have simulated evolution using virtual robots and watched them develop locomotion strategies of their own.

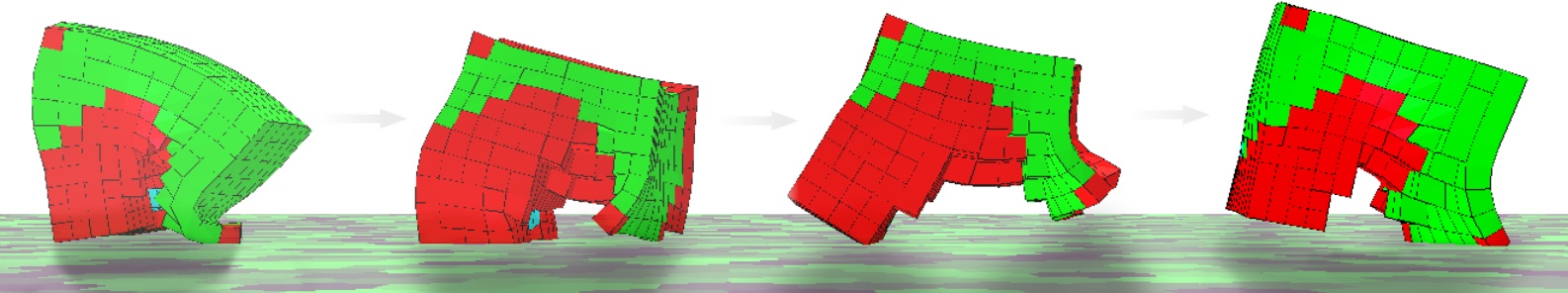

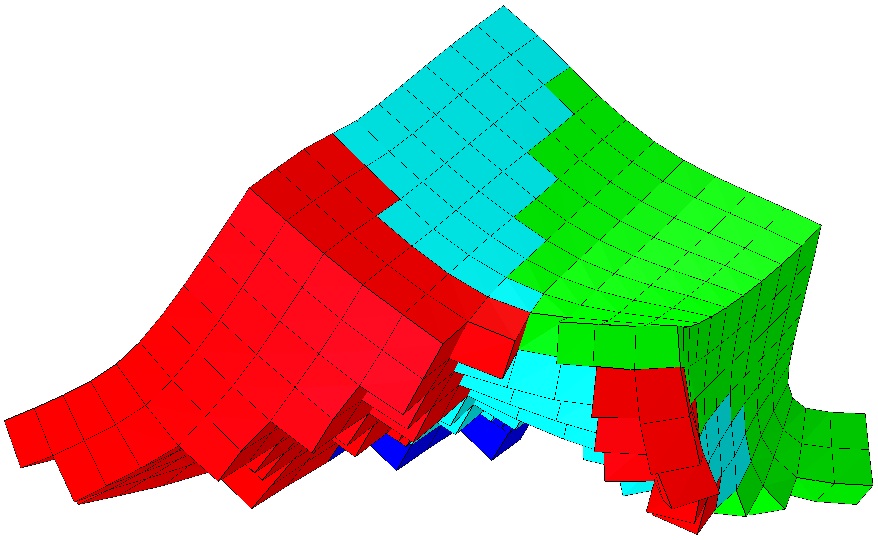

In the robot-creating simulations, researchers started with random assortments of four types of tissue: two kinds of muscle, soft support tissue and bone. The simulations favored the tissue configurations that traveled the fastest from point A to point B. Then the team allowed the mathematical simulation to run its course over 1,000 generations of robots.
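For readers who want to see the idea in miniature, here is a rough Python sketch of that select-and-reproduce loop. It is not the team's code. The body size, population size, mutation rate and especially the `toy_speed` score are assumptions made for illustration; the researchers scored robots by how fast they moved in a physics simulation, which this stand-in does not attempt. The sketch also stores each body as a plain list of tissues, which is simpler than the encoding the team used.

```python
import random

# The four tissue types named in the article.
TISSUES = ["muscle_a", "muscle_b", "soft", "bone"]
MUSCLES = {"muscle_a", "muscle_b"}
N_VOXELS = 27  # assumed body size (3 x 3 x 3); not taken from the article


def random_robot(rng):
    """A random assortment of tissues, one per voxel."""
    return [rng.choice(TISSUES) for _ in range(N_VOXELS)]


def toy_speed(robot):
    """Placeholder fitness (an assumption, not the real measure).

    Counts neighbouring positions in the list where muscle sits next to
    non-muscle tissue. The real work measured simulated travel speed.
    """
    return sum(1 for a, b in zip(robot, robot[1:])
               if (a in MUSCLES) != (b in MUSCLES))


def mutate(robot, rng, rate=0.05):
    """Copy a robot, occasionally swapping a voxel's tissue at random."""
    return [rng.choice(TISSUES) if rng.random() < rate else tissue
            for tissue in robot]


def evolve(generations=1000, pop_size=30, seed=0):
    """Keep the better half each generation and refill with mutated copies."""
    rng = random.Random(seed)
    population = [random_robot(rng) for _ in range(pop_size)]
    for _ in range(generations):
        population.sort(key=toy_speed, reverse=True)
        parents = population[: pop_size // 2]
        children = [mutate(rng.choice(parents), rng)
                    for _ in range(pop_size - len(parents))]
        population = parents + children
    return max(population, key=toy_speed)


if __name__ == "__main__":
    best = evolve()
    print(toy_speed(best), best)
```

Because the better half of each generation survives unchanged, the best score in this sketch can only stay level or improve over time, which mirrors the "slightly better ones reproduce more" dynamic in a very simplified way.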

"We see really cool stuff as a result of that, without any interaction from me or anyone else, just this process unfolding itself," Nick Cheney, a member of the research team and a doctoral student at Cornell University, told an audience of reporters Tuesday (May 21) here in midtown Manhattan.

The team dubbed the categories of successful robot design that emerged the L-Walker, the Incher, the Push-Pull, the Jitter, the Jumper and the Wings.

"I would never come up with anything that looks remotely like that," Cheney said, referring to one of these virtual robots. The bots consist of cubes known as voxels (three-dimensional pixels), which display bright colors signifying different types of tissue.

In these simulations, the virtual robots accomplished something highly unusual for robots: They adapted.

Most robots currently in use in the real world are precisely engineered to work in highly constrained environments, such as manufacturing floors, with their every action hand designed and coded by engineers. As a result, these machines cannot adapt to unfamiliar surroundings.

Unlike human engineers, however, nature is a master at creating creatures that can adapt to and interact with their surroundings. This happens through natural selection, the process by which certain traits give organisms a better chance to survive and thus produce more offspring. Nature thus "selects" these traits to persist in future generations. Cheney and colleagues are striving for a similar process in robotics.

Although the creatures he and colleagues created do not currently exist in the real world, they could be created with 3D printing.

"The truth of the matter is we can print almost anything, any design," he said, noting researchers recently made an artificial ear with living cells using a 3D printer.

In creating the virtual, soft-bodied robots, the team intentionally avoided the traditional robotics design approach, Cheney said.

"We wanted to be true to nature and introduce muscles and bones and tissues," he said.

Most of the random assortments of tissues that served as a starting point were "pretty bad," he said. "Every once in a while, you get lucky and one is slightly better. Those reproduce more … Over time, you get some pretty amazing things."

In real life, a molecule called DNA (deoxyribonucleic acid) encodes the instruction set to create a living organism; analogously, these virtual robots were created using what is known as a compositional pattern-producing network, or a network of mathematical functions, Cheney said.
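To give a feel for what "a network of mathematical functions" can mean here, the following Python sketch feeds each voxel's position into a small set of functions and picks a tissue from the result. It is only an illustration under stated assumptions: the two-layer layout, the particular functions, the random weights and the names `make_network`, `tissue_at` and `build_body` are all hypothetical, and the sketch does not show how the team's networks were structured or evolved.

```python
import math
import random

# The four tissue types named in the article.
TISSUES = ["muscle_a", "muscle_b", "soft", "bone"]

# Assumed menu of simple functions the network can compose.
ACTIVATIONS = [math.sin, math.tanh, lambda v: math.exp(-v * v), abs]


def make_network(rng, hidden=4):
    """Random weights for a tiny two-layer network (hypothetical layout)."""
    layer1 = [([rng.uniform(-2, 2) for _ in range(3)], rng.choice(ACTIVATIONS))
              for _ in range(hidden)]
    layer2 = [[rng.uniform(-2, 2) for _ in range(hidden)] for _ in TISSUES]
    return layer1, layer2


def tissue_at(network, x, y, z):
    """Map one voxel position to a tissue: the highest-scoring output wins."""
    layer1, layer2 = network
    hidden = [f(w[0] * x + w[1] * y + w[2] * z) for w, f in layer1]
    scores = [sum(wi * hi for wi, hi in zip(weights, hidden))
              for weights in layer2]
    return TISSUES[scores.index(max(scores))]


def build_body(network, size=3):
    """Query the network at every voxel of a size x size x size cube."""
    if size < 2:
        raise ValueError("size must be at least 2")
    # Scale grid indices to the range [-1, 1].
    coords = [i / (size - 1) * 2 - 1 for i in range(size)]
    return {(i, j, k): tissue_at(network, coords[i], coords[j], coords[k])
            for i in range(size) for j in range(size) for k in range(size)}


if __name__ == "__main__":
    body = build_body(make_network(random.Random(1)), size=3)
    for position, tissue in sorted(body.items()):
        print(position, tissue)
```

The point of the design is that the same compact set of functions describes every voxel, so a small change to the network can reshape a whole region of the body at once, loosely as a change in DNA can affect many cells.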

Many of the strategies that emerged among the soft-bodied robots mimicked those of animals, such as a galloping horse or a crawling inchworm.

The research team included Cheney, colleagues Robert MacCurdy and Hod Lipson of Cornell's Creative Machines Lab, and Jeff Clune of the University of Wyoming's Evolving AI Lab. The research is scheduled for presentation at the Genetic and Evolutionary Computation Conference in Amsterdam in July.

Follow us @livescience, Facebook & Google+. Original article on LiveScience.com.
