Earthquake Forecasters Look Closer at Rock Friction

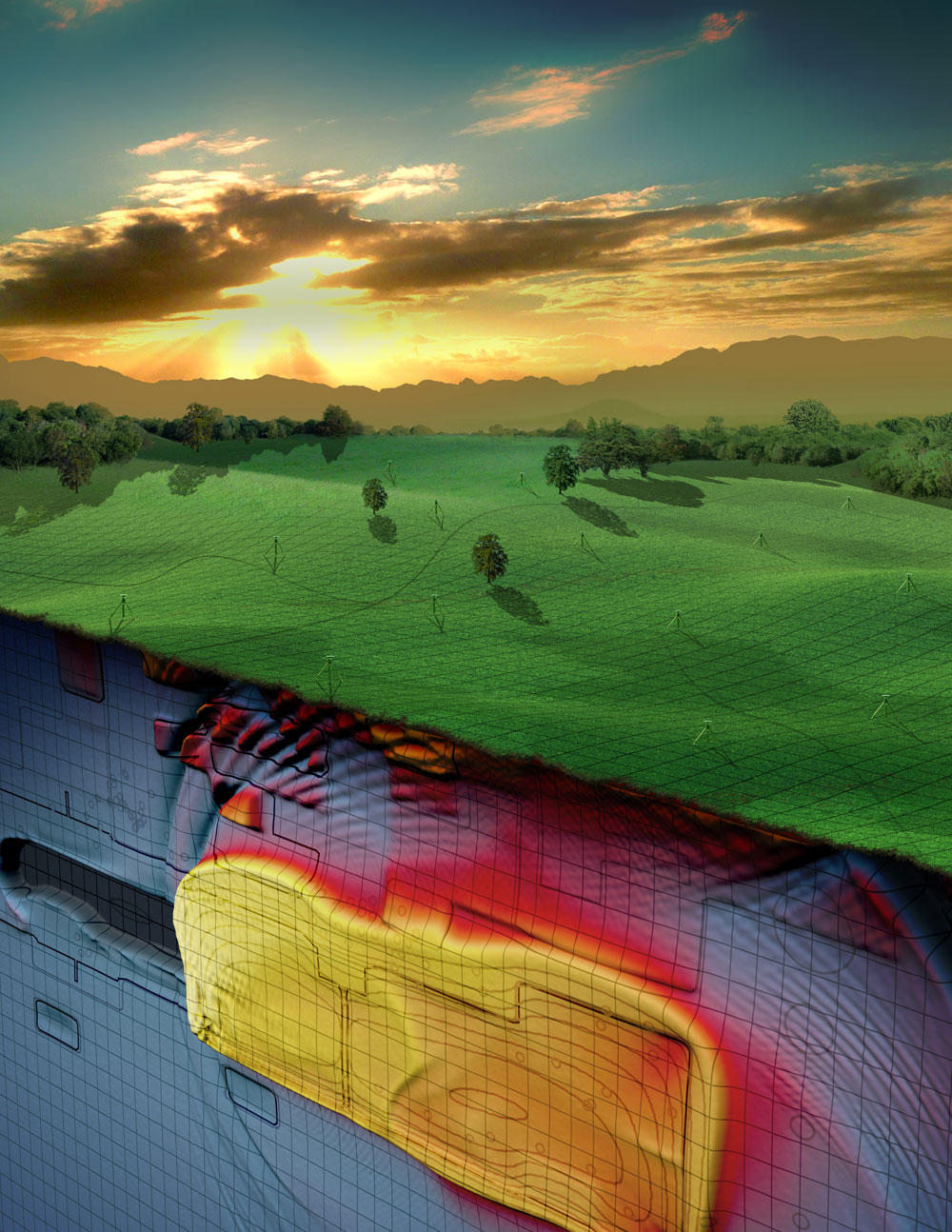

More-accurate forecasts of earthquakes may arise from a new computer model focusing on the physics of the rock in one quake-prone segment of the San Andreas fault, researchers say.

Although the basic physics of earthquakes has been known for a century, developing computer models of earthquake dynamics has been challenging. However, the amount of historical data available from the Parkfield segment of the San Andreas fault may prove helpful.

"A major limitation to earthquake prediction is that we do not as of today know the physics that explains the full spectrum of fault behavior," said researcher Sylvain Barbot, a geophysicist at the California Institute of Technology. "The difficulty of earthquake prediction is that they [quakes] occur during just a few seconds but repeat every hundreds of years, and that the details of what happened during these few seconds carry a lot of weight in how long it will take before the next one."

Researchers are now attempting to figure out what happens during those few seconds by analyzing the friction of rock. Their findings are detailed in the May 11 issue of the journal Science.

Fault friction

The likelihood of a quake is dictated by the physics of the friction between rocks and the forces exerted on them, much as rubbing your hands together takes a different amount of effort with gloves on than without.

Barbot and his colleagues applied their rock physics strategy to the Parkfield area, some 200 miles (320 kilometers) northwest of Los Angeles. It has experienced a relatively predictable cycle of earthquakes for the past 150 years, seeing moderate-magnitude earthquakes every 20 years on average. That pattern led to the only official earthquake forecast in the United States: In 1985, scientists predicted that a magnitude 6 earthquake was likely to occur there before 1993. A magnitude 6 quake did occur there, but not until September 2004. The exact timing of quakes at Parkfield continues to elude researchers.

Taking advantage of Parkfield's history of detailed measurements, the scientists constructed a physics-based model of the region.

"What's great about these earthquakes is that they don't kill anyone, and we can study them with the best available technology every time they happen," Barbot said. "If there is one place in the world where we could predict earthquakes, it would be at Parkfield."

Their model could explain the distribution of small earthquakes at Parkfield and how they relate to the occurrence of large earthquakes.

"We can take physical laws describing how fault rocks behave in the lab and create models that reproduce a whole range of observations in a natural setting," Barbot told OurAmazingPlanet. "This implies that we are getting closer to understanding how earthquakes really work."

Not a prediction

Barbot said it would be dangerous to conclude from the researchers' results "that we may seem ready to predict earthquakes." Instead, this model "can readily be used to identify the area of the fault that needs to be better monitored to capture earthquake precursors, and test if they exist at all," he said.

Over time, the model could thus lay the groundwork for earthquake prediction. "Even if we're not ready for a full physics-based earthquake forecast, we're setting up the tools for this kind of analysis," Barbot said.

In the future, this strategy could be applied to other faults as well. "In general, such models will be the most accurate in areas where we have a long and detailed history of past earthquakes, knowledge of the precise geometry of the fault at depth, and an idea of the spatial distribution of the friction on the plate interface," Barbot said.

A long-term goal of the researchers "is to integrate and study the interaction among neighboring faults or fault segments," Barbot said. "The Cholame segment of the San Andreas fault, in the Carrizo Plain, hosted a magnitude 7.9 earthquake in 1857, and there is a possibility that the Parkfield earthquakes, immediately to the north, may trigger an event of similar size."
