Humans Have Caused the Most Dramatic Climate Change in 3 Million Years

The level of carbon dioxide in the atmosphere today is likely higher than it has been at any time in the past 3 million years. This rise in the level of carbon dioxide, a greenhouse gas, could bring temperatures not seen over that entire timespan, according to new research.

The study researchers used computer modeling to examine the changes in climate during the Quaternary period, which started around 2.59 million years ago and continues into today. Over that period, Earth has undergone a number of changes, but none so rapid as those seen today, said study author Matteo Willeit, a postdoctoral climate researcher at the Potsdam Institute for Climate Impact Research.

"To get a climate warmer than the present, you basically have to go back to a different geological period," Willeit told Live Science.

3 million years of climate

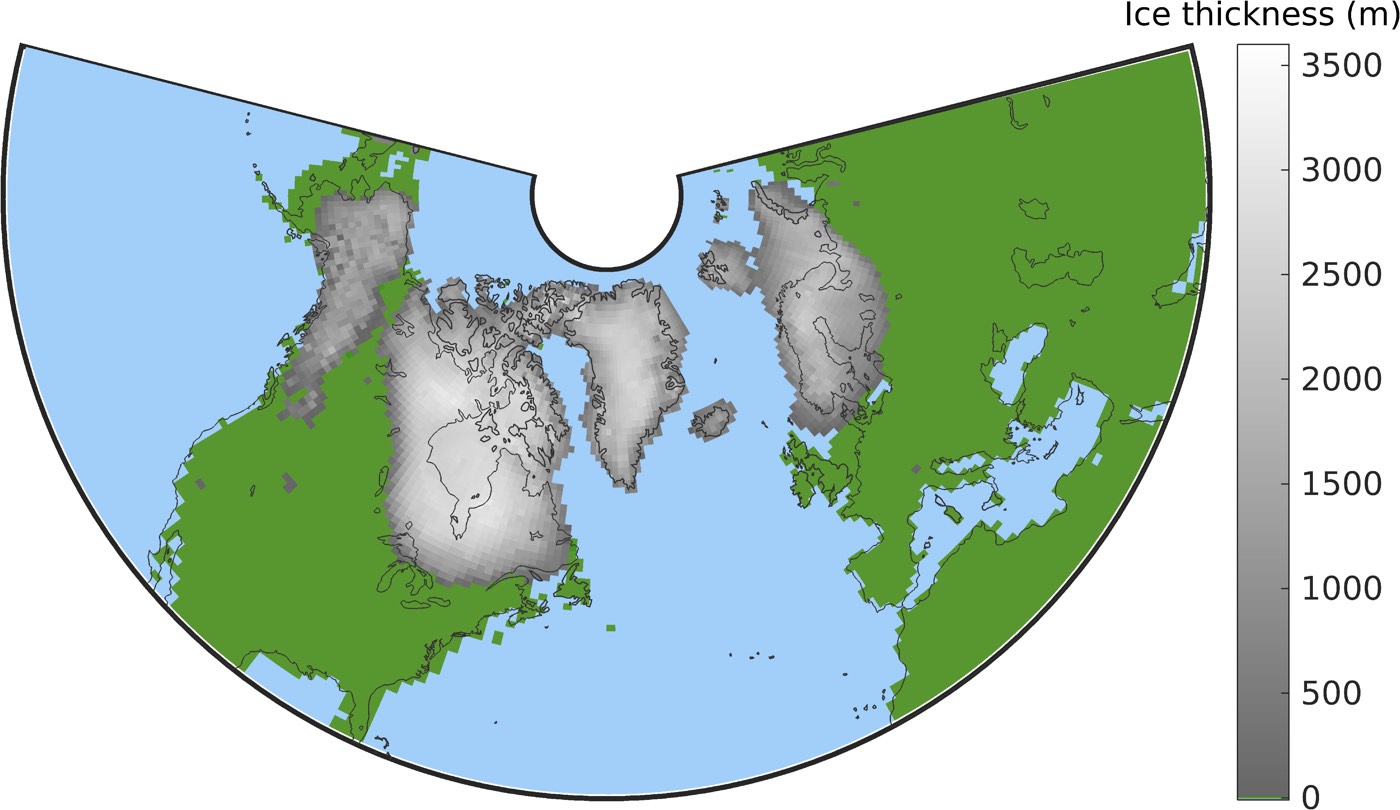

The Quaternary period began with a period of glaciation, when ice sheets stole down from Greenland to cover much of North America and northern Europe. At first, these glaciers advanced and retreated on a 41,000-year cycle, driven by changes in the Earth's orbit around the sun, Willeit said.

But between 1.25 million and 0.7 million years ago, these glacial and interglacial cycles stretched out, re-occurring every 100,000 years or so, a phenomenon called the mid-Pleistocene transition because of the epoch in which it occurred. The question, Willeit said, is what caused the transition, given that the pattern of variations in Earth's orbit hadn't changed.

Willeit and his team used an advanced computer simulation of the Quaternary to try to answer that question. Models are only as good as the parameters included, and this one included a lot: atmospheric conditions, ocean conditions, vegetation, global carbon, dust and ice sheets. The researchers included what is known about the parameters and then tweaked them to see what conditions could create the mid-Pleistocene transition.

How things have changed

The team found that for 41,000-year glacial cycles to change to 100,000-year cycles, two things had to happen: Carbon dioxide in the atmosphere had to decline, and glaciers had to scour away a layer of sediment called the regolith.

Carbon dioxide may have declined for different reasons, Willeit said, such as a decrease in the greenhouse gas spewing from volcanoes, or changes in the weathering rate of rocks, which would lead to more carbon becoming locked up in sediments carried to the bottom of the sea. Less carbon in the atmosphere meant less heat being trapped, so the climate would have cooled to the point where large ice sheets could form more easily.

Geologic processes provided the crucial second ingredient for longer glacial cycles. When continents are ice-free for long periods of time, they acquire a top layer of ground-up, unconsolidated rock called regolith. Earth's moon is a good place to see an example today: The moon's thick dust layer is a regolith.

Ice that forms on top of this regolith tends to be less stable than ice that forms on firm bedrock, Willeit said (imagine the difference in stability between a surface made of ball bearings versus that of a flat table top). Similarly, regolith-based ice sheets flow faster and stay thinner than ice on bedrock does. When changes in the Earth's orbit alter the amount of heat that hits the Earth's surface, these thinner ice sheets are particularly prone to melting.

But glaciers also bulldoze regolith away, pushing the dusty stuff to their glacial edges. This glacial scouring re-exposes the bedrock; after a few glacial cycles in the early Quaternary, the bedrock would have been exposed, giving newly forming ice sheets a firmer place to anchor, Willeit said. These resilient ice sheets, plus a cooler climate, resulted in the longer glacial cycles seen after about a million years ago. Interglacial periods still occurred because of orbital changes, but they became shorter.

Climate then and now

Those findings are important for understanding the conditions that determined whether places like Chicago or New York City are liveable or are covered in a mile of ice. But they're also useful for framing today's climate change, Willeit said.

Records of atmospheric carbon that existed before about 800,000 years ago have to be reconstructed rather than measured directly from ice cores, so estimates of the amount of carbon in the atmosphere have varied. Willeit and his team's modeling research suggests that carbon dioxide was below 400 parts per million for the entire Quaternary period. Today, the global average is 405 parts per million and rising.

In the late Pliocene, around 2.5 million years ago, average global temperatures were temporarily about 2.7 degrees Fahrenheit (1.5 degrees Celsius) higher than the average before the widespread use of fossil fuels, Willeit's model showed. Those ancient temperatures currently hold the record for the highest in the entire Quaternary period.

But that could soon change. Already, the globe is 2.1 degrees F (1.2 degrees C) warmer than the pre-industrial average. The 2016 Paris Agreement would limit warming to 2.7 degrees F (1.5 degrees C), matching the climate of 2.5 million years ago. If the world can't manage that limit and heads toward 3.6 degrees F (2 degrees C), the previous international goal, it will be the hottest global average seen in this geological period.
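For readers who want to check the paired Fahrenheit and Celsius figures, here is a minimal sketch of the arithmetic. It assumes only the standard rule that a temperature difference scales by 9/5 between the two units, with no 32-degree offset because these are changes in temperature, not thermometer readings. The function name and dictionary labels are illustrative and do not come from the study.

```python
def delta_c_to_f(delta_c: float) -> float:
    """Convert a temperature *difference* from Celsius degrees to Fahrenheit degrees."""
    # Differences scale by 9/5 only; the +32 offset applies to absolute readings.
    return delta_c * 9.0 / 5.0


# Two warming levels quoted in the article, in degrees C above pre-industrial.
# The labels are illustrative shorthand, not terms from the study.
anomalies_c = {
    "late Pliocene peak": 1.5,
    "previous international goal": 2.0,
}

# Round to one decimal place, matching the precision used in the article.
anomalies_f = {label: round(delta_c_to_f(c), 1) for label, c in anomalies_c.items()}

for label, f in anomalies_f.items():
    print(f"{label}: {anomalies_c[label]} C is {f} F of warming")
```

Under that rule, 1.5 degrees C of warming corresponds to 2.7 degrees F, and 2 degrees C corresponds to 3.6 degrees F.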

"Our study puts this into perspective," Willeit said. "It clearly shows that even if you look at past climates over very long timescales, what we are doing now in terms of climate change is something big and very fast, compared to what happened in the past."

The findings will be published today (April 3) in the journal Science Advances.

- The Reality of Climate Change: 10 Myths Busted

- Images of Melt: Earth's Vanishing Ice

- In Photos: The Vanishing Ice of Baffin Island

Originally published on Live Science.

Stephanie Pappas is a contributing writer for Live Science, covering topics ranging from geoscience to archaeology to the human brain and behavior. She was previously a senior writer for Live Science but is now a freelancer based in Denver, Colorado, and regularly contributes to Scientific American and The Monitor, the monthly magazine of the American Psychological Association. Stephanie received a bachelor's degree in psychology from the University of South Carolina and a graduate certificate in science communication from the University of California, Santa Cruz.