The Top 10 Revolutionary Computers

Revolutionizing Computing

From the Apple Macintosh with its graphical user interface to the Xerox PARC Alto, which never hit store shelves, to the U.S. Army's 30-ton Electronic Numerical Integrator And Computer (ENIAC), there is a long history of computers to explore. Here's a look at how modern computing has changed over the years.

IBM Roadrunner

The title of fastest supercomputer has become hard to retain for long, but the current champ, IBM's Roadrunner, is also notable for being the first machine with sustained throughput exceeding a petaflop: more than a quadrillion floating point operations per second. Physically, it's bigger than the ENIAC computer unveiled in 1946, but, if history is any guide, we'll see equivalent power on a desktop in a few decades.

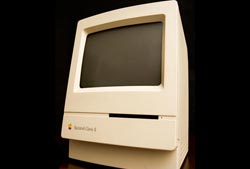

Apple Macintosh

The "computer for the rest of us," the Apple Macintosh, raised the bar for the rest of the personal computer industry when it came out in 1984 by abandoning the command-line interface (as used by the PC's MS-DOS operating system and essentially everyone else) for a graphical user interface. Not only that, it was commercially successful doing so.

IBM PC

The IBM PC was hardly the fastest or slickest microcomputer when it came out in 1981, but it did have those three initials, and it established hardware and software standards in a market whose growth had previously been stymied by fragmentation among competing architectures. The clone, software, and peripheral markets that sprang up around the PC led directly to today's personal computer ecosystem.

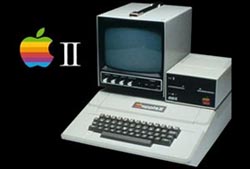

Apple II

Introduced by Apple Computer in 1977 and kept on the market for an unprecedented 15 years, the Apple II demonstrated conclusively that there was a mass personal computer market. Its pioneering use of color graphics also won it a wide following in the education market, along with disdain in the business market, which saw color as frivolous.

TRS-80

When it introduced the TRS-80 in 1977, Radio Shack figured its original production run of 3,000 could be used as cash registers if they didn't sell. But sales exceeded that projection by a factor of 80. The "Trash-80" was one of the first machines whose documentation was intended for non-geeks, and its widespread adoption led to the first mass market for third-party personal computer software. For the first time, non-geeky high-school kids could write programs and make a computer do their bidding.

Xerox PARC Alto

A single-user computer with a graphical interface with windows and icons, a mouse for cursor control, a local hard drive, and an Ethernet connection to the rest of the office and/or world—that probably describes the machine you're using right now. Those features first came together in the Alto, an experimental machine developed at the Xerox Palo Alto Research Center (PARC) in 1974. Xerox, however, never had the nerve to put the Alto on the market.

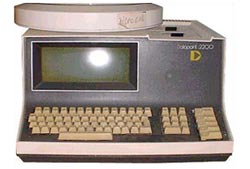

Datapoint 2200

One of the first single-user computers on the market when it came out in 1970 from the now-defunct Computer Terminal Corp., the Datapoint 2200 lives on in every PC today. CTC convinced fellow start-up Intel to reduce the machine's processor to a single chip, to combat system heating. Intel ended up adding the chip to its catalog, founding today's "Intel dynasty" of PC microprocessors. (System heating, alas, remains a problem.)

IBM System/360

With an extensive set of standard peripherals and a range of compatible models at different price points, the S/360 tapped a huge pent-up demand for business computers when IBM brought it out in 1964. Its popularity provided the economic foundation for the modern computer industry.

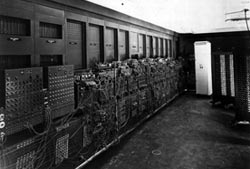

ENIAC

Programmable and capable of 357 multiplication operations per second, the U.S. Army's Electronic Numerical Integrator And Computer (ENIAC) hinted at what a computer could do when it was unveiled in 1946. However, it weighed 30 tons, used 17,478 vacuum tubes, consumed 150 kilowatts, and had to be programmed with patch cables and switches.

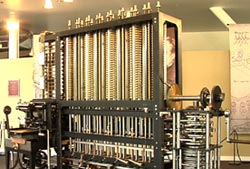

The Difference Engine

Charles Babbage lost his knack for talking money out of the British government, which wanted a machine that could generate math tables, and so the computer revolution didn't begin in England in 1822. (Did you notice the 8 in that date?) Babbage designed an amazing computer; it just did not get built until recently, when replica mechanical computers were constructed using his original, complex blueprints. They work perfectly.

Live Science Plus