Is Global Warming a Giant Natural Fluctuation? (Op-Ed)

Shaun Lovejoy is a professor of physics at McGill University and president of the Nonlinear Processes Division of the European Geosciences Union. He contributed this article to Live Science's Expert Voices: Op-Ed & Insights.

Last year, the Quebec Skeptics Society threw down the gauntlet: "If anthropogenic global warming is as strong as scientists claim, then why do they need supercomputers to demonstrate it?" My immediate response was, "They don't." Indeed, in 1896 — before the warming was perceptible — the Swedish scientist Svante Arrhenius, toiling for a year, predicted that doubling carbon dioxide (CO2) levels would increase global temperatures by 5 to 6 degrees Celsius, which turns out to be close to modern estimates.

Yet the skeptics' question resonated: Global Circulation Models (GCMs) dominate climate research to such an extent that even scientists can be forgiven for thinking these computer-driven models are essential. So I took up the challenge, and my answer appears in a paper recently published in the journal Climate Dynamics.

I started with a basic aspect of the traditional scientific method: No theory ever can be proven beyond "reasonable doubt," and anthropogenic warming is no exception. Climate skeptics have ruthlessly exploited this alleged weakness, stating that the models are wrong, and that the warming is natural. Fortunately, scientists have a fundamental methodological asymmetry to use against these skeptics: A single decisive experiment effectively can disprove a scientific hypothesis.

That's what I claim to have done. Examining the theory that global warming is only natural, I showed — without any use of GCMs — that the probability that warming is simply a giant natural fluctuation is so small as to be negligible.

Here's how I went about it.

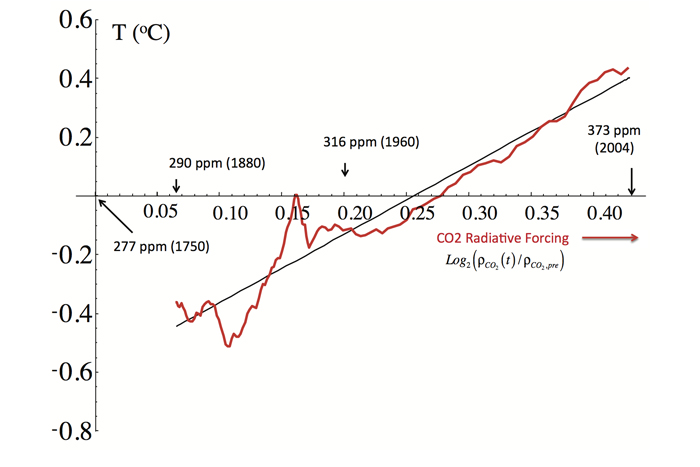

First, my study uses CO2 as a surrogate for all human effects. While it's true that humans have also changed land use and emitted other greenhouse gases (associated with warming) and aerosols (associated with cooling), these changes are strongly linked to CO2 via global economic activity. To a good approximation, if you double the economy, you double the emissions and, therefore, you double the effects.


It turns out that the resulting relationship between global temperature and the CO2 proxy is very tight: The proxy predicts with 95 percent certainty that a doubling of CO2 levels in the atmosphere will lead to a warming of 1.9 to 4.2 degrees C. This is close to the GCM-estimated range of 1.5 to 4.5 degrees C, which has been essentially unchanged since a 1979 U.S. National Academy of Sciences report. This new method also estimates that the temperature since 1880 has risen by between 0.76 and 0.98 degrees C, compared to an estimate of 0.65 to 1.05 degrees C cited in the Intergovernmental Panel on Climate Change's (IPCC) Fifth Assessment Report (AR5, 2013).

These ranges are so close that they help confirm the method. Beyond that, the differences only serve to fine-tune estimates of the magnitude of the 125-year temperature change.

The key, second part of my study uses data from the year 1500 to estimate the probability that this temperature change is due to natural causes. Since I am interested in rare, extreme fluctuations, a direct estimate would require far more pre-industrial measurements than are currently available. Statisticians regularly deal with this type of problem, usually solving it by applying the bell curve. Using this analysis shows that the chance of the fluctuation being natural would be in the range of one-in-100-thousand to one-in-10-million.
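To give a feel for what those bell-curve odds mean, the sketch below converts "one in N" odds into a number of standard deviations under a Gaussian distribution. It is only an illustration, not the calculation from the paper: the function name is hypothetical, and it assumes a one-sided tail (a warming fluctuation at least this large), which the article does not specify.

```python
from statistics import NormalDist


def sigmas_for_one_sided_odds(one_in: float) -> float:
    """Return how many standard deviations above the mean a Gaussian
    value must lie for its upper-tail probability to equal 1/one_in.

    Assumption: a one-sided (upper) tail of a standard bell curve.
    """
    tail_probability = 1.0 / one_in
    # By symmetry, the upper-tail threshold is minus the lower-tail quantile.
    return -NormalDist().inv_cdf(tail_probability)


# The two ends of the bell-curve range quoted in the text.
for odds in (100_000, 10_000_000):
    sigmas = sigmas_for_one_sided_odds(odds)
    print(f"1 in {odds:,}: about {sigmas:.1f} standard deviations")
```

Under these assumptions, the quoted range corresponds to a fluctuation somewhere between roughly four and a little over five standard deviations from the mean.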

Yet, climate fluctuations are much more extreme than those allowed by the bell curve. This is where my specialty — nonlinear geophysics — comes in.

Nonlinear geophysics confirms that the extremes should be far stronger than the usual "bell curve" allows. Indeed, I showed that giant, century-long fluctuations are more than 100 times more likely than the bell curve would predict. Yet, at one in a thousand, their probability is still small enough to confidently reject them.
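As a rough consistency check on these figures, the sketch below multiplies the bell-curve odds quoted earlier (one in 100 thousand to one in 10 million) by the factor of 100. The constant and function names are hypothetical, the factor is treated as exactly 100 although the text says "more than 100," and this simple scaling is not the nonlinear-geophysics calculation itself.

```python
from fractions import Fraction

# Bell-curve odds quoted in the article (upper and lower ends of the range).
GAUSSIAN_ODDS = (Fraction(1, 100_000), Fraction(1, 10_000_000))

# Assumption: use exactly 100 as a lower bound for "more than 100 times".
FAT_TAIL_FACTOR = 100


def fat_tail_probability(gaussian_probability: Fraction,
                         factor: int = FAT_TAIL_FACTOR) -> Fraction:
    """Scale a bell-curve probability by the fat-tail factor."""
    return gaussian_probability * factor


for p in GAUSSIAN_ODDS:
    scaled = fat_tail_probability(p)
    print(f"bell curve 1 in {p.denominator:,} -> roughly 1 in {scaled.denominator:,}")
```

With these inputs, the larger of the two bell-curve probabilities scales to one in a thousand, which matches the figure quoted for the fat-tailed case.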

But what about Medieval warming with vineyards in Britain, or the so-called Little Ice Age with skating on the Thames? In the historical past, the temperature has changed considerably. Surely, the industrial-epoch warming is just another large amplitude natural event?

Well, no.

My result focuses on the probability of centennial-scale temperature changes. It does not exclude large changes, if they occur slowly enough. So if you must, let the peons roast and the Thames freeze solid; the result stands.

In its AR5 report last September, the IPCC strengthened its earlier 2007 qualification of "very likely" to "extremely likely that human influence has been the dominant cause of the observed warming since the mid-20th century." Yet skeptics continue to dismiss the models and insist that warming results from natural variability. The new GCM-free approach rejects natural variability, leaving the last vestige of skepticism in tatters.

Within minutes of the Climate Dynamics study going live, the Internet started buzzing. The great majority of the pickup was professional, with embellishments from various news sites. However, I also received aggressive emails, many from the Watts Up with That? (WUWT) site, which comments on climate change from a skeptic's perspective. The majordomo of this deniers' hub is the notorious Viscount Christopher Monckton of Brenchley, who — within hours — had declared to the faithful that the paper was no less than a "mephitically ectoplasmic emanation from the Forces of Darkness" and that "it is time to be angry at the gruesome failure of peer review."

Beyond the venom, however, the actual criticism amounted to little more than a disbelief in the quantification of error bars on estimates of century-scale global temperatures, even though this estimate was published a year ago and is of little importance to the conclusions.

So, where does this leave things?

Close to my home, it leaves an even greater disconnect between science and policy. The Canadian government has axed climate research (my research was unfunded) and shamelessly promoted the dirtiest fuels. Rather than trying to better understand and protect the country's fragile boreal environment, northern investment has focused on new military installations. The government has reneged on its international climate obligations.

Globally, investment in fossil fuels has far outstripped that in carbon-free and sustainable technologies, and two decades of international discussion have failed to prevent emissions from growing.

The world desperately needs to drop the skepticism and change course. Humanity's future depends on it.

Note: The author has posted a related Q+A about his paper.

The views expressed are those of the author and do not necessarily reflect the views of the publisher. This version of the article was originally published on Live Science.