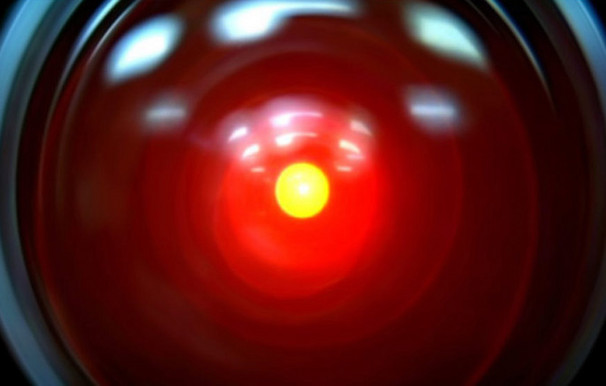

Humanity Must 'Jail' Dangerous AI to Avoid Doom, Expert Says

Super-intelligent computers or robots have threatened humanity's existence more than once in science fiction. Such doomsday scenarios could be prevented if humans can create a virtual prison to contain artificial intelligence before it grows dangerously self-aware.

Keeping the artificial intelligence (AI) genie trapped in the proverbial bottle could turn an apocalyptic threat into a powerful oracle that solves humanity's problems, said Roman Yampolskiy, a computer scientist at the University of Louisville in Kentucky. But successful containment requires careful planning so that a clever AI cannot simply threaten, bribe, seduce or hack its way to freedom.

"It can discover new attack pathways, launch sophisticated social-engineering attacks and re-use existing hardware components in unforeseen ways," Yampolskiy said. "Such software is not limited to infecting computers and networks — it can also attack human psyches, bribe, blackmail and brainwash those who come in contact with it."

A new field of research aimed at solving the AI prison problem could have side benefits for improving cybersecurity and cryptography, Yampolskiy suggested. His proposal was detailed in the March issue of the Journal of Consciousness Studies.

How to trap Skynet

One starting solution might trap the AI inside a "virtual machine" running inside a computer's typical operating system — an existing process that adds security by limiting the AI's access to its host computer's software and hardware. That stops a smart AI from doing things such as sending hidden Morse code messages to human sympathizers by manipulating a computer's cooling fans.

Putting the AI on a computer without Internet access would also prevent any "Skynet" program from taking over the world's defense grids in the style of the "Terminator" films. If all else fails, researchers could always keep an AI in check by throttling back computer processing speeds to slow its "thinking," regularly hitting the "reset" button or shutting down the computer's power supply.

Such security measures treat the AI as an especially smart and dangerous computer virus or malware program, but without the sure knowledge that any of the steps would really work.

"The Catch-22 is that until we have fully developed superintelligent AI we can't fully test our ideas, but in order to safely develop such AI we need to have working security measures," Yampolskiy told InnovationNewsDaily. "Our best bet is to use confinement measures against subhuman AI systems and to update them as needed with increasing capacities of AI."

Never send a human to guard a machine

Even casual conversation with a human guard could allow an AI to use psychological tricks such as befriending or blackmail. The AI might offer to reward a human with perfect health or immortality, or perhaps even to bring back dead family and friends. Alternatively, it could threaten to do terrible things to the human once it "inevitably" escapes.

The safest approach for communication might only allow the AI to respond in a multiple-choice fashion to help solve specific science or technology problems, Yampolskiy explained. That would harness the power of AI as a super-intelligent oracle.
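As a rough illustration of that multiple-choice idea, the sketch below shows a channel that lets only a single option number through and drops anything else. It is not drawn from Yampolskiy's paper; the function name `ask_oracle` and the `answer_fn` callback standing in for the confined AI are hypothetical, chosen only for this example.

```python
from typing import Callable, Optional, Sequence


def ask_oracle(
    question: str,
    choices: Sequence[str],
    answer_fn: Callable[[str, Sequence[str]], object],
) -> Optional[int]:
    """Return the index of the chosen option, or None if the reply is invalid.

    `answer_fn` is a hypothetical stand-in for the confined system.
    """
    raw = answer_fn(question, choices)
    # Only a plain integer naming one of the offered choices gets through.
    # Free text, booleans and out-of-range numbers are all discarded, so the
    # reply cannot carry a persuasive message to the human operator.
    if isinstance(raw, int) and not isinstance(raw, bool) and 0 <= raw < len(choices):
        return raw
    return None


if __name__ == "__main__":
    options = ["Compound A", "Compound B", "Compound C"]
    print(ask_oracle("Which compound is most stable?", options, lambda q, c: 1))
    print(ask_oracle("Which compound is most stable?", options, lambda q, c: "Let me out"))
```

A filter like this narrows what the AI can say in a single reply, but it does nothing about side channels such as response timing or patterns across many answers.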

Despite all the safeguards, many researchers think it's impossible to keep a clever AI locked up forever. A past experiment by Eliezer Yudkowsky, a research fellow at the Singularity Institute for Artificial Intelligence, suggested that mere human-level intelligence could escape from an "AI Box" scenario, although Yampolskiy pointed out that the test wasn't done in the most scientific way.

Still, Yampolskiy argues strongly for keeping AI bottled up rather than rushing headlong to free our new machine overlords. But if the AI reaches the point where it rises beyond human scientific understanding to deploy powers such as precognition (knowledge of the future), telepathy or psychokinesis, all bets are off.

"If such software manages to self-improve to levels significantly beyond human-level intelligence, the type of damage it can do is truly beyond our ability to predict or fully comprehend," Yampolskiy said.

This story was provided by InnovationNewsDaily, a sister site to Live Science. You can follow InnovationNewsDaily Senior Writer Jeremy Hsu on Twitter @ScienceHsu. Follow InnovationNewsDaily on Twitter @News_Innovation, or on Facebook.
