How Cloud Computing Works (Infographic)

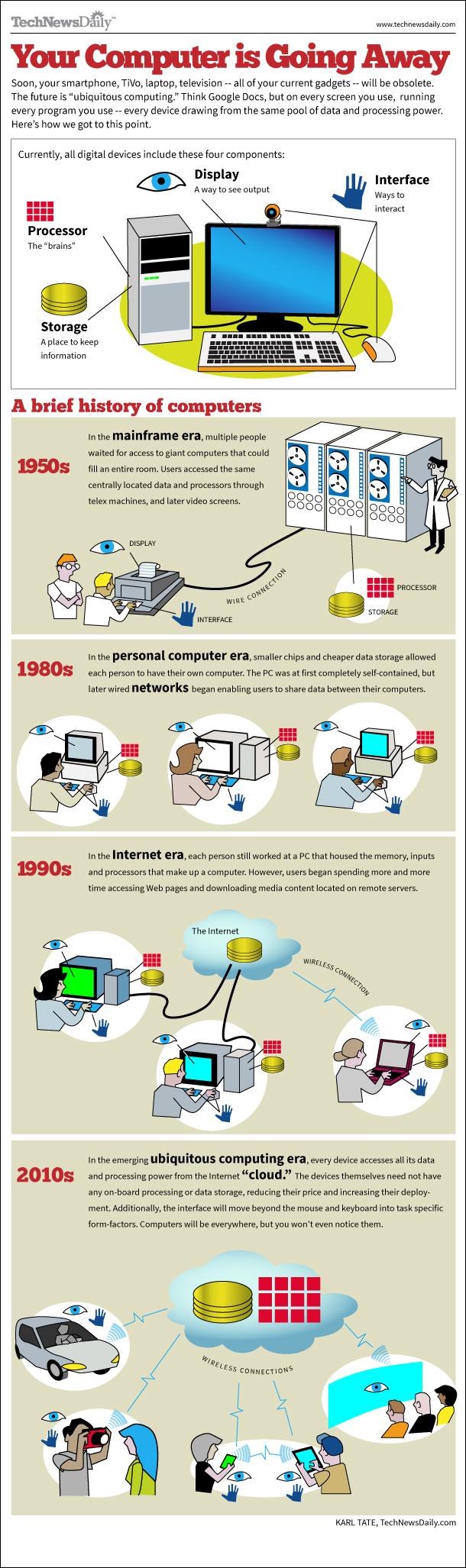

Soon, your smartphone, TiVo, laptop, television -- all of your current gadgets -- will be obsolete. The future is “ubiquitous computing.” Think Google Docs, but on every screen you use, running every program you use -- every device drawing from the same pool of data and processing power. Here’s how we got to this point.

In the mainframe era, many people waited their turn for access to giant computers that could fill an entire room. Users reached the same centrally located data and processors through teletype terminals, and later through video screens.

In the personal computer era, smaller chips and cheaper data storage allowed each person to have their own computer. The PC was at first completely self-contained, but wired networks later let users share data between their computers.

In the Internet era, each person still worked at a PC that housed the memory, inputs and processors that make up a computer. However, users began spending more and more time accessing Web pages and downloading media content located on remote servers.

In the emerging ubiquitous computing era, every device draws all its data and processing power from the Internet “cloud.” The devices themselves need not have any on-board processing or data storage, which lowers their price and makes them easier to deploy widely. Additionally, the interface will move beyond the mouse and keyboard into task-specific form factors. Computers will be everywhere, but you won’t even notice them.
