9 Super-Cool Uses for Supercomputers

Supercomputers are the bodybuilders of the computer world. They boast tens of thousands of times the computing power of a desktop and cost tens of millions of dollars. They fill enormous rooms, which are chilled to prevent their thousands of microprocessor cores from overheating. And they perform trillions, or even thousands of trillions, of calculations per second.

All of that power means supercomputers are perfect for tackling big scientific problems, from uncovering the origins of the universe to delving into the patterns of protein folding that make life possible. Here are some of the most intriguing questions being tackled by supercomputers today.

Recreating the Big Bang

It takes big computers to look into the biggest question of all: What is the origin of the universe?

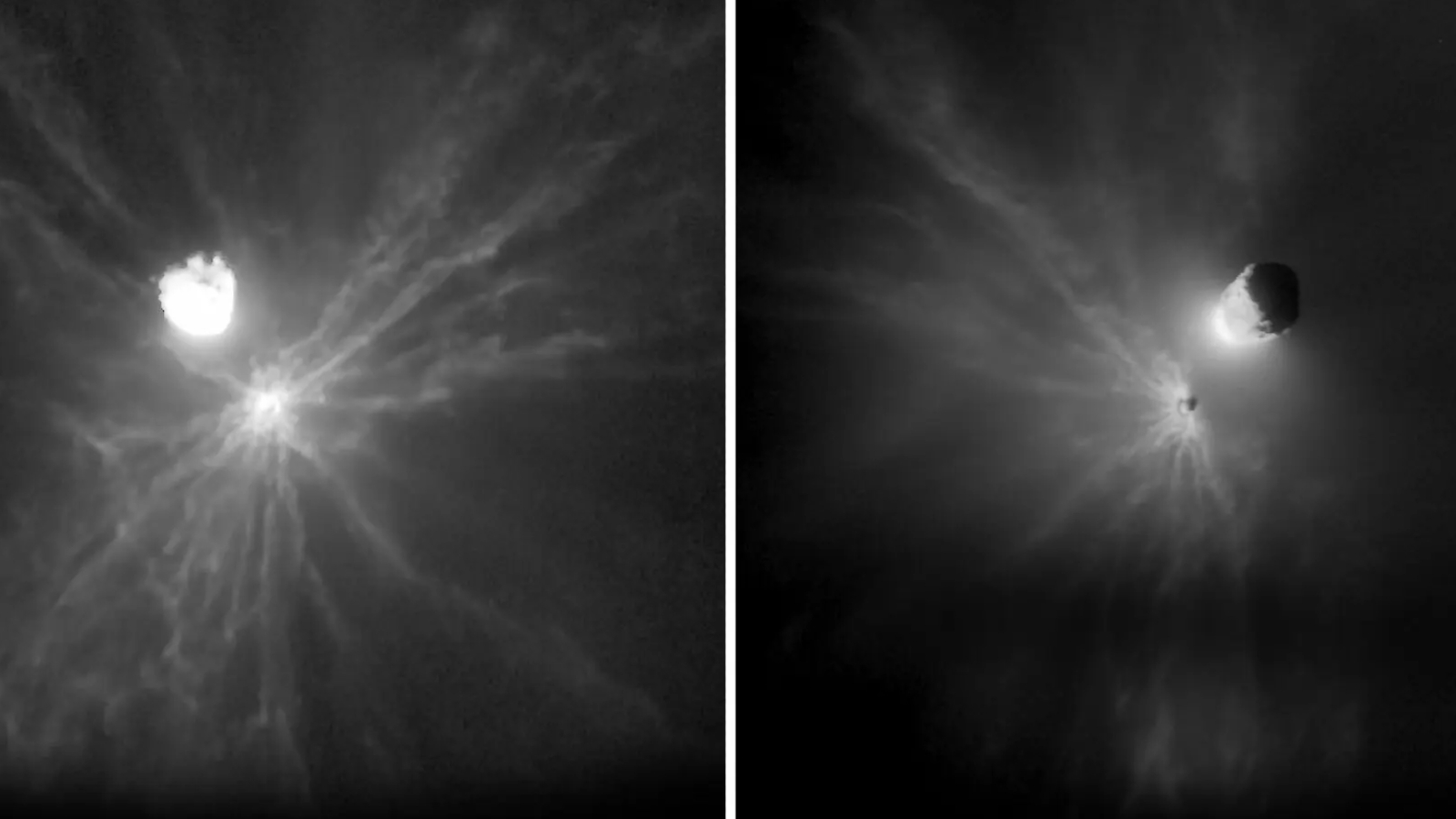

The "Big Bang," or the initial expansion of all energy and matter in the universe, happened more than 13 billion years ago in trillion-degree Celsius temperatures, but supercomputer simulations make it possible to observe what went on during the universe's birth. Researchers at the Texas Advanced Computing Center (TACC) at the University of Texas at Austin have also used supercomputers to simulate the formation of the first galaxy, while scientists at NASA’s Ames Research Center in Mountain View, Calif., have simulated the creation of stars from cosmic dust and gas.

Supercomputer simulations also make it possible for physicists to answer questions about the unseen universe of today. Invisible dark matter makes up about 25 percent of the universe, and dark energy makes up more than 70 percent, but physicists know little about either. Using powerful supercomputers like IBM's Roadrunner at Los Alamos National Laboratory, researchers can run models that require upward of a thousand trillion calculations per second, allowing for the most realistic models of these cosmic mysteries yet.

Understanding earthquakes

Other supercomputer simulations hit closer to home. By modeling the three-dimensional structure of the Earth, researchers can predict how earthquake waves will travel both locally and globally. It's a problem that seemed intractable two decades ago, says Princeton geophysicist Jeroen Tromp. But by using supercomputers, scientists can solve very complex equations that mirror real life.

"We can basically say, if this is your best model of what the earth looks like in a 3-D sense, this is what the waves look like," Tromp said.

By comparing any remaining differences between simulations and real data, Tromp and his team are perfecting their images of the Earth's interior. The resulting techniques can be used to map the subsurface for oil exploration or carbon sequestration, and can help researchers understand the processes occurring deep in the Earth's mantle and core.
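As a rough illustration of fitting a model by comparing simulated and recorded data, the Python sketch below picks the wave speed whose predicted arrival times best match a set of observations. Everything in it is hypothetical: the station distances, the observed times, the candidate speeds and the uniform-speed assumption. Real seismic imaging solves full 3-D wave equations and is far more involved.

```python
# Toy model fitting: choose the wave speed whose predicted arrival
# times best match "observed" ones. All numbers are made up.

def predicted_times(distances_km, speed_km_s):
    """Arrival times if waves crossed a uniform medium at one speed."""
    return [d / speed_km_s for d in distances_km]

def misfit(observed, predicted):
    """Sum of squared differences between observed and predicted times."""
    return sum((o - p) ** 2 for o, p in zip(observed, predicted))

def best_speed(distances_km, observed_times_s, candidate_speeds):
    """Return the candidate speed with the smallest misfit."""
    return min(
        candidate_speeds,
        key=lambda v: misfit(observed_times_s, predicted_times(distances_km, v)),
    )

# Hypothetical stations and arrival times (roughly 6 km/s plus noise).
distances = [60.0, 120.0, 180.0, 240.0]
observed = [10.1, 19.8, 30.2, 39.9]
candidates = [5.0, 5.5, 6.0, 6.5, 7.0]

print(best_speed(distances, observed, candidates))
```

The same idea, shrinking the gap between simulation and measurement, scales up to models with millions of unknowns, which is where supercomputers come in.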

Folding proteins

In 1999, IBM announced plans to build the fastest supercomputer the world had ever seen. The first challenge for this technological marvel, dubbed "Blue Gene"?

Unraveling the mysteries of protein folding.

Proteins are made of long strands of amino acids folded into complex three-dimensional shapes. Their function is driven by their form. When a protein misfolds, there can be serious consequences, including disorders like cystic fibrosis, Mad Cow disease and Alzheimer's disease. Finding out how proteins fold — and how folding can go wrong — could be the first step in curing these diseases.

Blue Gene isn't the only supercomputer to work on this problem, which requires massive amounts of power to simulate mere microseconds of folding time. Using simulations, researchers have uncovered the folding strategies of several proteins, including one found in the lining of the mammalian gut. Meanwhile, the Blue Gene project has expanded. As of November 2009, a Blue Gene system in Germany is ranked as the fourth-most powerful supercomputer in the world, with a maximum processing speed of a thousand trillion calculations per second.

Mapping the bloodstream

Think you have a pretty good idea of how your blood flows? Think again. The total length of all of the veins, arteries and capillaries in the human body is between 60,000 and 100,000 miles. To map blood flow through this complex system in real time, Brown University professor of applied mathematics George Karniadakis works with multiple laboratories and multiple computer clusters.

In a 2009 paper in the journal Philosophical Transactions of the Royal Society, Karniadakis and his team describe the flow of blood through the brain of a typical person compared with blood flow in the brain of a person with hydrocephalus, a condition in which cranial fluid builds up inside the skull. The results could help researchers better understand strokes, traumatic brain injury and other vascular brain diseases, the authors write.

Modeling swine flu

Potential pandemics like the H1N1 swine flu require a fast response on two fronts: First, researchers have to figure out how the virus is spreading. Second, they have to find drugs to stop it.

Supercomputers can help with both. During the recent H1N1 outbreak, researchers at Virginia Polytechnic Institute and State University in Blacksburg, Va., used an advanced model of disease spread called EpiSimdemics to predict the transmission of the flu. The program, which is designed to model populations up to 300 million strong, was used by the U.S. Department of Defense during the outbreak, according to a May 2009 report in IEEE Spectrum magazine.

Meanwhile, researchers at the University of Illinois at Urbana-Champaign and the University of Utah were using supercomputers to peer into the virus itself. Using the Ranger supercomputer at the TACC in Austin, Texas, the scientists unraveled the structure of swine flu. They figured out how drugs would bind to the virus and simulated the mutations that might lead to drug resistance. The results showed that the virus was not yet resistant, but would be soon, according to a report by the TeraGrid computing resources center. Such simulations can help doctors prescribe drugs that won't promote resistance.
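To give a feel for what modeling disease spread involves, here is a minimal Python sketch of a classic SIR (susceptible, infected, recovered) compartmental model. It is not EpiSimdemics, which the article describes only as an advanced model. The function name, the transmission rate `beta`, the recovery rate `gamma` and the starting counts are all assumptions chosen for illustration.

```python
def simulate_sir(population, initial_infected, beta, gamma, days):
    """Step a discrete-time SIR model one day at a time.

    beta: hypothetical transmission rate per day
    gamma: hypothetical recovery rate per day
    Returns a list of (susceptible, infected, recovered) tuples.
    """
    s = float(population - initial_infected)
    i = float(initial_infected)
    r = 0.0
    history = [(s, i, r)]
    for _ in range(days):
        new_infections = beta * s * i / population
        new_recoveries = gamma * i
        s -= new_infections
        i += new_infections - new_recoveries
        r += new_recoveries
        history.append((s, i, r))
    return history

# Made-up scenario: one million people, ten initial cases.
history = simulate_sir(population=1_000_000, initial_infected=10,
                       beta=0.4, gamma=0.2, days=200)
peak_day, peak = max(enumerate(history), key=lambda item: item[1][1])
print(f"Infections peak on day {peak_day} with about {peak[1]:,.0f} people infected")
```

A model this small runs instantly on a laptop. Tracking individuals across a population of hundreds of millions is what pushes the problem onto a supercomputer.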

Testing nuclear weapons

Since 1992, the United States has banned the testing of nuclear weapons. But that doesn't mean the nuclear arsenal is out of date.

The Stockpile Stewardship program uses non-nuclear lab tests and, yes, computer simulations to ensure that the country's cache of nuclear weapons is functional and safe. In 2012, IBM plans to unveil a new supercomputer, Sequoia, at Lawrence Livermore National Laboratory in California. According to IBM, Sequoia will be a 20-petaflop machine, meaning it will be capable of performing twenty thousand trillion calculations each second. Sequoia's prime directive is to create better simulations of nuclear explosions and to do away with real-world nuke testing for good.

Forecasting hurricanes

With Hurricane Ike bearing down on the Gulf Coast in 2008, forecasters turned to Ranger for clues about the storm's path. This supercomputer, with its cowboy moniker and processing power of 579 trillion calculations per second, resides at the TACC in Austin, Texas. Using data directly from National Oceanic and Atmospheric Administration airplanes, Ranger calculated likely paths for the storm. According to a TACC report, Ranger improved the five-day hurricane forecast by 15 percent.

Simulations are also useful after a storm. When Hurricane Rita hit Texas in 2005, Los Alamos National Laboratory in New Mexico lent manpower and computer power to model vulnerable electrical lines and power stations, helping officials make decisions about evacuation, power shutoff and repairs.

Predicting climate change

The challenge of predicting global climate is immense. There are hundreds of variables, from the reflectivity of the Earth's surface (high for icy spots, low for dark forests) to the vagaries of ocean currents. Dealing with these variables requires supercomputing capabilities. Computer power is so coveted by climate scientists that the U.S. Department of Energy gives out access to its most powerful machines as a prize.

The resulting simulations both map out the past and look into the future. Models of the ancient past can be matched with fossil data to check for reliability, making future predictions stronger. New variables, such as the effect of cloud cover on climate, can be explored. One model, created in 2008 at Brookhaven National Laboratory in New York, mapped the aerosol particles and turbulence of clouds to a resolution of 30 square feet. These maps will have to become much more detailed before researchers truly understand how clouds affect climate over time.
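The influence of surface reflectivity mentioned above can be illustrated with a zero-dimensional energy balance model, a textbook simplification and not one of the supercomputer models the article describes. The Python sketch below balances absorbed sunlight against emitted heat radiation. The albedo values for the example surfaces are rough assumptions, and the model ignores the atmosphere, oceans and clouds entirely.

```python
SOLAR_CONSTANT = 1361.0            # W/m^2, approximate sunlight at Earth's orbit
STEFAN_BOLTZMANN = 5.670374419e-8  # W/(m^2 K^4)

def equilibrium_temperature(albedo):
    """Temperature (kelvin) at which emitted radiation balances absorbed sunlight.

    albedo: fraction of sunlight reflected (0 = absorbs all, 1 = reflects all)
    """
    absorbed = SOLAR_CONSTANT * (1.0 - albedo) / 4.0  # averaged over the sphere
    return (absorbed / STEFAN_BOLTZMANN) ** 0.25

# Rough, assumed albedos for illustration only.
surfaces = [("dark forest", 0.15), ("planetary average", 0.30), ("fresh ice", 0.60)]
for label, albedo in surfaces:
    print(f"{label}: {equilibrium_temperature(albedo):.0f} K")
```

Even this one-line balance shows the direction of the effect: the more reflective the surface, the colder the equilibrium. Full climate models track this and hundreds of other variables on a fine grid over time.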

Building brains

So how do supercomputers stack up to human brains? Well, they're really good at computation: It would take 120 billion people with 120 billion calculators 50 years to do what the Sequoia supercomputer will be able to do in a day. But when it comes to the brain's ability to process information in parallel by doing many calculations simultaneously, even supercomputers lag behind. Dawn, a supercomputer at Lawrence Livermore National Laboratory, can simulate the brain power of a cat — but 100 to 1,000 times slower than a real cat brain.
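The comparison above can be checked with back-of-the-envelope arithmetic. The Python sketch below assumes the 20 petaflops quoted earlier for Sequoia and a 365.25-day year, and asks how fast each of the 120 billion people would need to work. The variable names are illustrative.

```python
SEQUOIA_CALCS_PER_SECOND = 20e15   # 20 petaflops, as quoted for Sequoia
SECONDS_PER_DAY = 24 * 60 * 60
SECONDS_PER_YEAR = 365.25 * SECONDS_PER_DAY  # assumes a 365.25-day year

PEOPLE = 120e9
YEARS = 50

# Work Sequoia would complete in one day.
calcs_in_one_day = SEQUOIA_CALCS_PER_SECOND * SECONDS_PER_DAY

# Total person-seconds available to the human calculators.
person_seconds = PEOPLE * YEARS * SECONDS_PER_YEAR

rate_per_person = calcs_in_one_day / person_seconds

print(f"Sequoia in one day: {calcs_in_one_day:.3e} calculations")
print(f"Implied pace: {rate_per_person:.1f} calculations per person per second")
```

Under these assumptions, the comparison works out to roughly nine calculations per person per second, with everyone working around the clock.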

Nonetheless, supercomputers are useful for modeling the nervous system. In 2006, researchers at the École Polytechnique Fédérale de Lausanne in Switzerland successfully simulated a 10,000-neuron chunk of a rat brain called a neocortical unit. With enough of these units, the scientists on this so-called "Blue Brain" project hope to eventually build a complete model of the human brain.

The brain would not be an artificial intelligence system, but rather a working neural circuit that researchers could use to understand brain function and test virtual psychiatric treatments. But Blue Brain could be even better than artificial intelligence, lead researcher Henry Markram told The Guardian newspaper in 2007: "If we build it right, it should speak."

Stephanie Pappas is a contributing writer for Live Science, covering topics ranging from geoscience to archaeology to the human brain and behavior. She was previously a senior writer for Live Science but is now a freelancer based in Denver, Colorado, and regularly contributes to Scientific American and The Monitor, the monthly magazine of the American Psychological Association. Stephanie received a bachelor's degree in psychology from the University of South Carolina and a graduate certificate in science communication from the University of California, Santa Cruz.