Science Fiction or Fact: Humanlike Intelligent Machines Will Soon Exist

In this weekly series, Life's Little Mysteries rates the plausibility of popular science fiction concepts.

In many futuristic tales, our heroic protagonists are often helped — and sometimes harmed — by intelligent machines far more clever than an iPhone. These computers sometimes walk and talk among us. Quick-witted machines serve on spaceships like Lieutenant Commander Data on "Star Trek: The Next Generation," or in our homes like the wisecracking housemaid Rosie the Robot on "The Jetsons."

Now, artificial intelligence research has quite a ways to go before these visions are realized. Perhaps the closest domestic robot we have to Rosie is the Roomba, that couch-bumping disk of an automated vacuum cleaner.

Robots and computers have already proved far more reliable and proficient than humans at specific tasks, such as assembly-line work or crunching numbers. Yet machines cannot handle a range of activities that strike us as basic, such as tying a shoe while holding a conversation.

"What we have learned so far from 50 to 60 years of AI research is that surpassing human intelligence in a very narrow area or maybe even in a task-oriented way — like playing a particular game — as sophisticated as it may be, is a lot easier than creating machines that have what we call the 'common sense' of a 3-year-old child," said Shlomo Zilberstein, a professor of computer science at the University of Massachusetts.

Given the pace of progress, however, many scientists believe highly intelligent machines will be available in the coming decades. But it is less clear when (or if) computers will achieve human-like "sentience," in terms of self-interest and free will — a premise very much at the heart of many sci-fi stories.

Ever more human

A motivating force behind designing computers with humanlike AI will be to make our interactions with them more natural. "I think the argument for building computers that look and behave like people is very strong," Zilberstein said. [Top 10 Inventions that Changed the World]

We currently interact with our household and office technologies using touch screens, voice commands and remote controls. Engineers want to do better. "One of the areas where you will see a lot of improvement is that you're going to interact with your gadgets, such as your TV soon, by talking to them and performing certain gestures," Zilberstein said.

After all, that is how human beings exchange information. We use "natural language" full of idioms, cultural references and inflections that infuse far richer meaning into our words than their literal definitions. (For example, when we use sarcasm, duh.)

Humans color their spoken words with facial expressions and body language, too. "It's just easier for people to interact that way," Zilberstein said. All of this has long befuddled computers.

Hear me now?

On the language side of things, though, a few recent advancements have made some waves. IBM's Watson computer last year smoked its human competition in "Jeopardy!", a game full of wordplay and sly references.

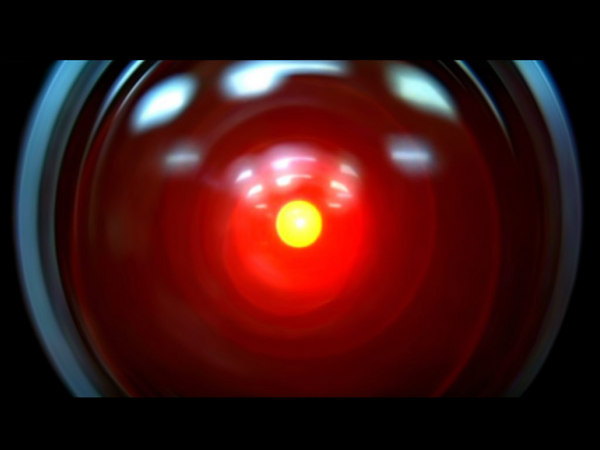

More recently, Apple unveiled its Siri personal assistant on the iPhone 4S. The software also understands an impressive range of natural language inputs and has a number of clever, programmed retorts at its disposal. (Siri has certain eerie parallels with the HAL 9000 computer in "2001: A Space Odyssey.")

In order to understand the full spectrum of human communications, though, machines will need to see as well as hear us. And they will need to speak back in a similar manner to get everything straight, "which is a lot more efficient than reading a whole block of text from the computer," Zilberstein said.

Comprehendingly conversant

A famous metric of a machine's relative intelligence is the Turing test, proposed in 1950. To pass the test, a computer must convince a human, over a conversation of arbitrary length, that he or she is talking with another person and not a machine.

So-called chatterbots have performed fairly well in this department by exploiting the human tendency to anthropomorphize, or to ascribe agency and intelligence where they do not, in fact, exist.

With machines, "we can fake [human interaction] in a way that's surprisingly effective," said Bart Massey, a computer scientist at Portland State University in Oregon. "We can already make an interactive fiction, giving [a computer] catchphrases and a particular attitude of narrated speech. The vast human capacity to anthropomorphize stuff makes it easy to cheat."

Continued advancements of voice-activated menus and programs will render computers impressively "smarter" yet. "Those machines will develop more and you're starting to see things like Siri that have more and more of a simulated personality," Massey said. "We will end up with systems that at the surface level will feel very intelligent."

Some AI folks think that greater computing power and ever-cleverer algorithms will eventually be able to match our brain's outputs. After all, the number of calculations machines can perform in a given amount of time, and the abilities this processing allows for, have grown by staggering amounts since the dawn of computing. [How Do Calculators Calculate?]

But not all in the field are convinced humanlike intelligence can be replicated in code. "I'm not one of the people who believe that if we just make [computers] massively faster and more parallelized and with more storage, if we scale it up enough, that free will and emotions will emerge automatically in some way," Zilberstein said. "There's still some gap we don’t fully understand and certainly cannot design and engineer at this point."

Too smart for their own good

To a large extent, roboticists will probably try to avoid emergent properties of consciousness anyway. A key reason: utility. A household robot like Rosie does not need "personhood" and emotions in order for "her" to do a good job; in fact, sentience might well get in the way.

"No one wants Rosie to be able to compose poetry or have emotional breakdowns with the loss of a limb," Massey said. "You want the robot to clean."

He continued: "The ethical concerns that truly self-aware robots raise alone are worthy of serious consideration. Even if we knew how to make them, there would be a huge debate about whether this was okay and about how we'd have to treat them."

So while the machines around us will keep getting brighter and more "like" us, it could be a long time before the 'bots possess sentience and self-motivation.

Even when they do, we might not recognize it. "There's a saying that one definition of AI is stuff that computers can't do yet," Massey said. "Just as humans have a tendency to anthropomorphize, we also have a tendency to deify ourselves. Every time a computer achieves the ability to do something we say it must not be smart."

Plausibility score: Machines are well on their way to becoming extremely capable and intelligent as judged by human standards. Because it seems most likely that the only reason computers and robots may not match our particular mental framework someday is societal discretion, we give intelligent machines 4 out of 4 Rocketboys.

This story was provided by Life's Little Mysteries, a sister site to LiveScience.
