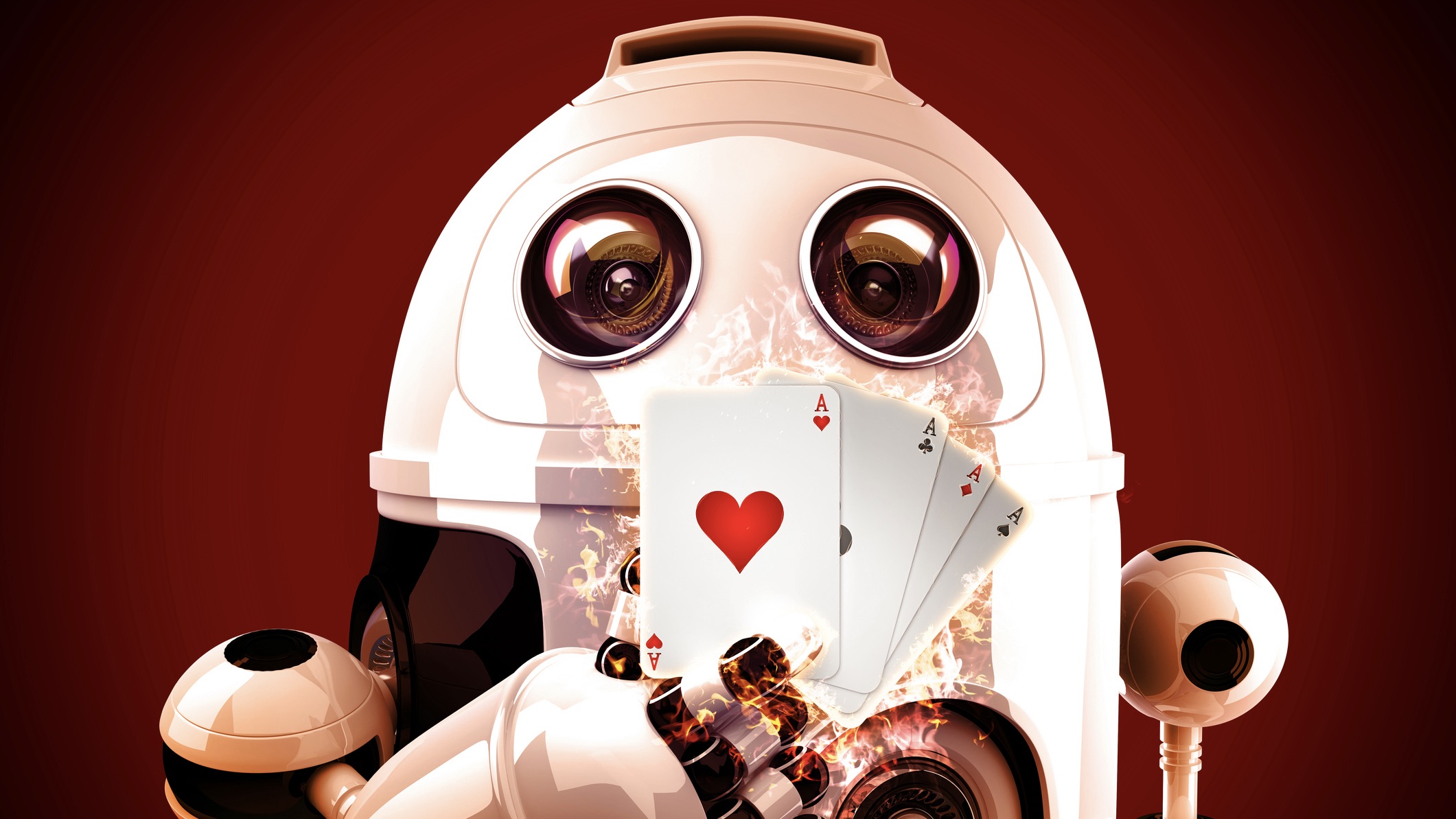

'Student of Games' is the 1st AI that can master different types of games, like chess and poker

AI programs usually master either information-perfect games like chess or information-imperfect games like poker, but "Student of Games" is a general algorithm that can master both types.

"Student of Games" can master both information-perfect games like Go and information-imperfect games like Scotland Yard.

Researchers have built "Student of Games," the first general-purpose artificial intelligence (AI) algorithm that can master a wide variety of games.

Game algorithms are normally designed to master either information-perfect games like Go or chess — in which each player has all the information — or information-imperfect games like poker, in which some information is hidden from other players. This is because the process of training the algorithms has historically been different for the two types of games: The former uses search and learning while the latter uses game-theoretic reasoning and learning.

But the new Student of Games algorithm gets around this limitation by combining guided search, self-play learning and game-theoretic reasoning, according to a new paper describing the algorithm, published Nov. 15 in the journal Science Advances.


When tested, Student of Games held its own in the information-perfect games chess and Go, as well as the information-imperfect games Texas Hold'em and Scotland Yard. However, it couldn't quite beat the best specialized AI algorithms in head-to-head matchups.

"This is a step towards making even more general algorithms," study lead author Martin Schmid, CEO and co-founder of EquiLibre Technologies, told Live Science in an email.

"One takeaway is that one can indeed design a technique that can work for both perfect and imperfect information games, rather than having specialized algorithms. Another interesting observation was that one of the important steps was to come up with a new formalism, allowing for truly general design of search based algorithm."


Games have long served as a benchmark for progress in the field of AI. For instance, in 2016, DeepMind's AlphaGo beat a professional human Go player. The following year, the Libratus system beat the world's best human poker players in a 20-day Texas Hold'em tournament.

"Games are a well-defined benchmark, and there is a long history of AI progress being tied to milestones in AI for games," Schmid explained. "Games are sometimes referred to as fruit flies of AI, allowing for quick development and gradual progress."

But there has always been a divide between information-perfect and information-imperfect games. To get around this, the team trained its general-purpose algorithm using what's known as a growing-tree counterfactual regret minimization (GT-CFR) algorithm, a variation of a widely used algorithm in which an AI system learns by playing against itself repeatedly.
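To give a flavor of what "learning by playing against itself" means in regret-based methods, here is a minimal Python sketch of regret matching, the update rule at the core of counterfactual regret minimization. It is not the GT-CFR algorithm from the paper: it uses rock-paper-scissors as a toy game (an assumption made here for illustration, not a game from the study), and the names `PAYOFF`, `strategy_from_regrets` and `self_play` are hypothetical.

```python
# Toy illustration only: plain regret matching in self-play, NOT the paper's GT-CFR.
# Payoff to player 0 (rows) in rock-paper-scissors; player 1 (columns) gets the negative.
PAYOFF = [
    [0, -1, 1],   # rock     vs rock, paper, scissors
    [1, 0, -1],   # paper    vs rock, paper, scissors
    [-1, 1, 0],   # scissors vs rock, paper, scissors
]


def strategy_from_regrets(regrets):
    """Play each action in proportion to its positive accumulated regret."""
    positive = [max(r, 0.0) for r in regrets]
    total = sum(positive)
    if total == 0:
        # No positive regret yet: fall back to a uniform mix.
        return [1.0 / len(regrets)] * len(regrets)
    return [p / total for p in positive]


def self_play(iterations=50_000):
    """Run both players against each other and return their average strategies."""
    n = 3
    # Assumption: player 0 starts biased toward rock so there is something to unlearn.
    regrets = [[1.0, 0.0, 0.0], [0.0, 0.0, 0.0]]
    strategy_sums = [[0.0] * n, [0.0] * n]

    for _ in range(iterations):
        s0 = strategy_from_regrets(regrets[0])
        s1 = strategy_from_regrets(regrets[1])

        # Expected payoff of each pure action against the opponent's current mix.
        u0 = [sum(PAYOFF[a][b] * s1[b] for b in range(n)) for a in range(n)]
        u1 = [sum(-PAYOFF[a][b] * s0[a] for a in range(n)) for b in range(n)]

        # Expected payoff of the mix each player actually used.
        v0 = sum(s0[a] * u0[a] for a in range(n))
        v1 = sum(s1[b] * u1[b] for b in range(n))

        for a in range(n):
            # Regret: how much better action `a` would have done than the current mix.
            regrets[0][a] += u0[a] - v0
            regrets[1][a] += u1[a] - v1
            strategy_sums[0][a] += s0[a]
            strategy_sums[1][a] += s1[a]

    return [[x / iterations for x in sums] for sums in strategy_sums]


if __name__ == "__main__":
    for player, avg in enumerate(self_play()):
        print(player, [round(p, 3) for p in avg])
```

In this toy setting, each player's average strategy should drift toward playing rock, paper and scissors about one-third of the time each, which is the game's equilibrium. Student of Games pairs this kind of regret-based reasoning with search and neural networks, none of which is modeled here.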

The team combined techniques used to build a variety of game-playing algorithms, from AlphaZero — a more advanced version of AlphaGo — to DeepStack — the first computer program to outplay human professionals in Texas Hold'em poker.

In the information-perfect category, the team found that Student of Games performed as well as human experts or professionals, but it was substantially weaker in head-to-head play than specialized algorithms like AlphaZero.


It did, however, beat the Texas Hold'em algorithm Slumbot, which the researchers claim is the best openly available poker agent, while also besting an unnamed state-of-the-art agent in Scotland Yard.

However, Student of Games would fall flat in complex games in which there's much more hidden information kept from participating players than in poker, study co-author Finbarr Timbers, a researcher at Midjourney, told Live Science in an email.

For example, in no-limit Hold'em, there are 1,326 possible opening hand combinations players may encounter. "Games like Starcraft or Stratego, which both have a much, much bigger list of possible private information that each player could have, would be infeasible for SoG to play," Timbers said.
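The figure of 1,326 comes from counting the distinct two-card starting hands that can be dealt from a standard 52-card deck when the order of the cards doesn't matter. A quick check in Python (the function name `starting_hand_count` is illustrative):

```python
from math import comb


def starting_hand_count(deck_size=52, hole_cards=2):
    """Number of unordered starting hands: 'deck_size choose hole_cards'."""
    return comb(deck_size, hole_cards)


if __name__ == "__main__":
    print(starting_hand_count())  # 52 * 51 / 2 = 1326
```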

In the future, the researchers plan to address the limitations they encountered, particularly how to reduce the high costs and computational power involved in running Student of Games and achieving strong performance.

Keumars is the technology editor at Live Science. He has written for a variety of publications including ITPro, The Week Digital, ComputerActive, The Independent, The Observer, Metro and TechRadar Pro. He has worked as a technology journalist for more than five years, having previously held the role of features editor with ITPro. He is an NCTJ-qualified journalist and has a degree in biomedical sciences from Queen Mary, University of London. He's also registered as a foundational chartered manager with the Chartered Management Institute (CMI), having qualified as a Level 3 Team leader with distinction in 2023.