Robots to Spin Your Emotions into Music

One could argue that most songs are spun out of human emotions. Erin Gee wants to take that music-making process in a more literal direction.

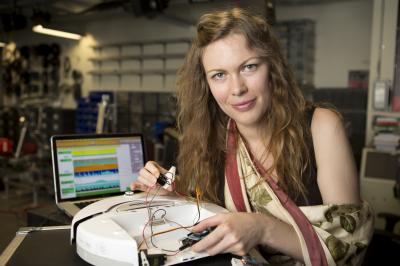

Borrowing some techniques from neuroscience, the Montreal-based artist is working to create robots that can translate our emotions into song.

"It will be like seeing someone expertly playing their emotions, as one would play a cello," Gee said in a statement.

She proposes that very fine microelectrode needles could be inserted into a peripheral nerve to record signals sent directly from the brain through the nervous system. This would provide an electronic picture of that person's emotions. Gee is currently creating software that would convert those electronic signals into instructions for her music-creating robots, which she says will sound like the glockenspiel.

But you might have to wait until next year to hear what happiness, grief or embarrassment sounds like. Gee, who is pursuing an MFA at Concordia University, plans to debut her robots next fall through the Montreal organization Innovations en Concert. She says actors will fuel the music by conjuring up different emotions.

"Each performance will be truly unique," Gee said. "Our specialized musical instruments will allow the emotional state of performers to drive the musical composition."
