Do Facts Matter Anymore in Public Policy? (Op-Ed)

Jeff Nesbit was the director of public affairs for two prominent federal science agencies. This article was adapted from one that first appeared in U.S. News & World Report. Nesbit contributed the article to LiveScience's Expert Voices: Op-Ed & Insights.

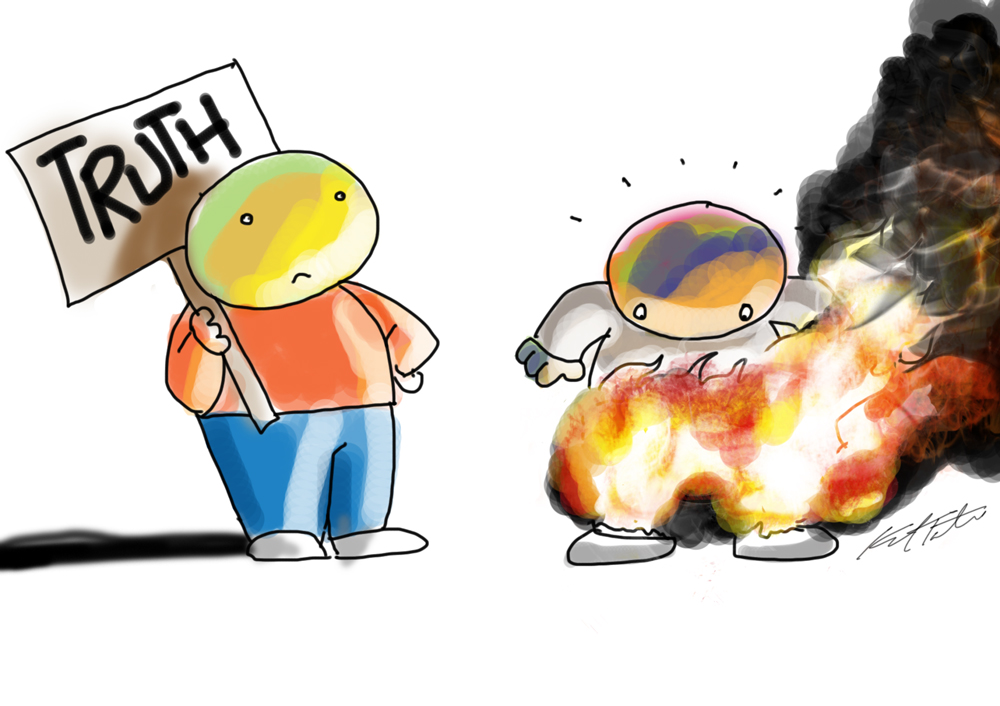

We may be entering a new political era — where objective science, evidence and facts no longer matter all that much in public policy debates. New research shows that people will even attempt to solve math problems differently if their political ideology is at stake.

The latest contribution to the social-science debate between two competing theories, the "deficit model" and "cultural cognition," clearly tilts the playing field toward the theory that pretty much everything is driven by our innate cultural beliefs rather than objective science, facts or evidence.

Journalists, by and large, believe that a well-informed, fact-based society will make sound democratic choices. That's the guts of the deficit model — that if the public only had better, factual information at their disposal, they'd make the right choices.

President Barack Obama and his national security staff, for instance, are betting a great deal on this social-science theory right now as they bring to light the facts surrounding the Syrian government's use of chemical weapons against its own people. The White House believes more facts about Syria's chemical weapons use will lead to greater public and congressional support for military action, should diplomatic efforts with the help of the Russians not pan out. Once the public knows the truth about Syria's use of chemical weapons against its people, it will support military action regardless of political philosophy, the White House believes.

In other areas, the deficit model says that if people read nutrition labels properly, they won't make poor food choices; if they learn the dangers of cigarettes and nicotine addiction, they'll stop smoking; if they learn that there aren't any meaningful genetic differences between races, then racism will disappear; or if they learn that 97 percent of climate scientists have reached a consensus that climate change is real and man-made, the political debate about the science itself will end.

Not so fast, say researchers in this latest paper, which describes a novel way to test the "cultural cognition" concept: the social-science theory that people act more on their beliefs than on the evidence, even when presented with an objective set of irrefutable facts.

It turns out that people do act and make decisions based on their political beliefs — and that this tendency can be so profound that it affects the way they perform even the most basic, objective tasks like adding or subtracting.

In a recent, sobering study funded by the National Science Foundation through the Cultural Cognition Laboratory at Yale University, researchers found that even people with quite good math skills ended up flunking an objective math problem simply because it went against their political beliefs.

In other words, two plus two equals four — unless your beliefs lead you to calculate this differently so that you end up with a math answer more to your liking.

People "were more likely to correctly identify the result most supported by the data when doing so affirmed the position one would expect them to be politically predisposed to accept … than when the correct interpretation of the data threatened or disappointed their predispositions," researchers Dan Kahan of Yale University, Ellen Peters of Ohio State University, Erica Cantrell Dawson of Cornell University, and Paul Slovic of the University of Oregon wrote in a paper submitted to the Social Science Research Network.

"The reason that citizens remain divided over risks in the face of compelling and widely accessible scientific evidence, this account suggests, is not that they are insufficiently rational," they wrote. "It is that they are too rational in extracting from information on these issues the evidence that matters most for them in their everyday lives."

The study, at the outset, asked more than 1,000 people to identify both their political beliefs and their math skills. The participants were then asked to solve a difficult problem that required interpreting the results of an imaginary scientific study. But there were two very different descriptions of what this fake study assessed, which the researchers specifically designed to test how people handled the problem based on their political beliefs. Some participants were told that the study simply measured the effectiveness of a new skin-rash treatment, but others were told that the fake scientific study was assessing a gun ban.

That's where things got interesting. As expected, people with better math and reasoning skills did better on the skin rash problem than those with lesser skills.

But when participants were presented with the exact same problem, framed this time as part of a gun-control assessment, things went off the rails. Political beliefs about a gun ban affected answers and reasoning ability.

Basically, people with both liberal and conservative political beliefs responded much differently to the same problem — depending on whether they thought the study was designed to assess skin rashes or the highly politicized issue of a right to bear arms.

What's more, for both political groups, people with greater math and numerical reasoning skills actually skewed their results more than those with lesser abilities depending on what they felt the fake study was assessing. Being smarter about something made it more likely that you would allow your political beliefs to harm your objective reasoning skills.

None of this is good, because it means that objective sets of facts, science and evidence are becoming less relevant in today's society, while the "tribes" you belong to and their leaders may be vastly more important to what you think and how you act.

This becomes dangerous when the leaders you trust for road maps simply lie or deceive for their own purposes. More and more, this research implies, there is a tendency to simply ignore an objective set of facts if it goes against your beliefs and what people you trust are telling you.

This sort of "cultural cognition" model has profound implications for all sorts of things. In the Syrian situation, for instance, it may not matter whether Syria used chemical weapons against its own people. What may matter more to you is whether you believe Barack Obama when he presents that objective set of facts. Facts don't matter. Who describes them to you does.

A version of this column appeared as "Do Facts Matter Anymore in Public Policy?" in U.S. News & World Report. Nesbit's most recent Op-Ed was "Will the Printed Word Survive in the Age of the Internet?" The views expressed are those of the author and do not necessarily reflect the views of the publisher.
