Smiley Like You Mean It: How Emoticons Get in Your Head (Op-Ed)

This article was originally published at The Conversation. The publication contributed the article to Live Science's Expert Voices: Op-Ed & Insights.

We may not spend a lot of time thinking about the emoticons we insert into our emails and text messages, but it turns out that they reveal something interesting about the way we perceive facial expressions.

In a new paper published today in Social Neuroscience, my colleagues and I at Flinders University and the University of South Australia investigated the neural processes involved in turning three punctuation points into a smiling face.

This shorthand form of expressing emotional states is, of course, a relatively recent invention. In the past, communicating such things sometimes required a little more complexity.

From Proust to instant messaging

In 1913, Marcel Proust started publishing what would become In Search of Lost Time. By the time the last volume was published in 1927, the work spanned 4,211 pages of text. A century later, Proust’s prose is regarded as one of the greatest examples of writing about human emotion. Yet who, in 2014, has that kind of time?

In the 21st century, writing on the screen places an emphasis on efficiency over accuracy. One example of this is the creation and mainstream acceptance of the emoticon “:-)” to indicate a happy or smiling demeanour.

The smiley face emoticon was first placed in a post to the Carnegie Mellon University computer science general board by Professor Scott E. Fahlman in 1982.

Fahlman initially intended the symbol to alert readers to the fact that the preceding statement should induce a smile rather than be taken seriously (it seems that satire already had a ubiquitous presence on the pre-internet). The emoticon, and variations on it, have since become commonplace in screen-based writing.

Reading emoticons

The frequency with which emoticons are used suggests that they are readily and accurately perceived as a smiling face by their creators and recipients, but the process through which this recognition takes place is unclear.

The physiognomic features that are used to create the impression of a face are actually typographic symbols — on their own, they carry no meaning as a pair of eyes, a nose or a mouth. Indeed, removed from their configuration as a face, each of the symbols reverts to its specific meaning for the punctuation of the surrounding text.

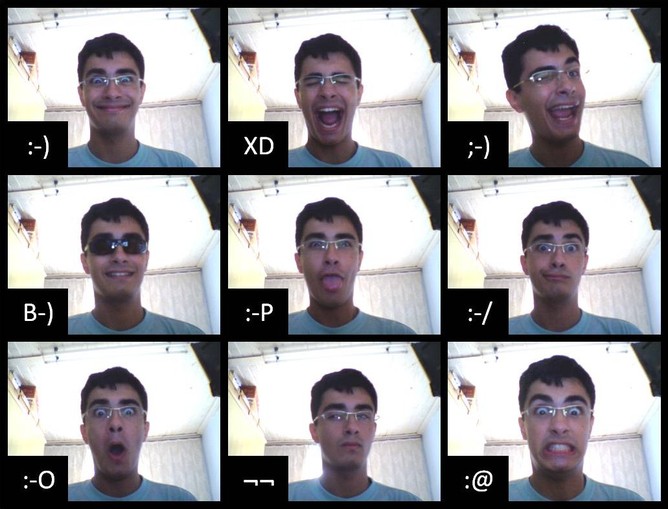

In our study, we recorded the electrical activity in the brains of young adults while they viewed images of emoticons and actual smiling faces.

Much work has been done previously to investigate the neural systems involved in the perception of faces, and one of the most reliable findings is that faces are processed differently when they are presented upside down.

Faces ain’t faces

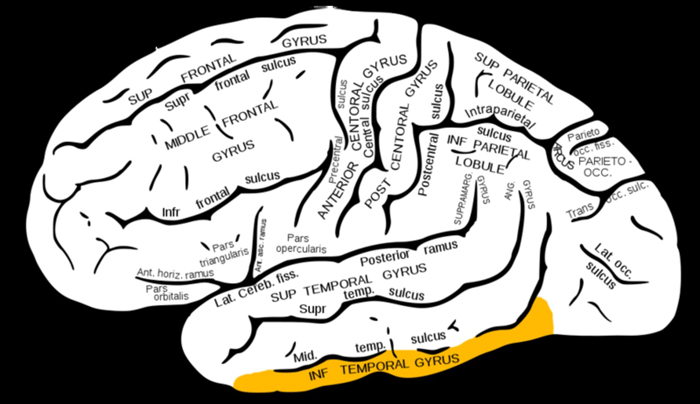

Upright, faces are perceived primarily due to their configuration — that is, the canonical arrangement of two eyes above a nose which is above a mouth — which is driven by regions of the brain in the occipito-temporal cortex.

But when faces are turned upside down, this arrangement is disrupted — and the perception of the face is driven by the processing of the individual features of eyes, nose and mouth. Neurobiologically speaking, this relies on more lateral brain regions in the posterior upper bank of the occipito-temporal sulcus and in the inferior temporal gyrus.

This difference in processing creates a characteristic “inversion effect” on the electrical activity recorded from the brain.

Our experiment replicated this effect for faces. However, emoticons did not produce this change in electrical potential due to inversion, suggesting that the feature processing regions in the inferior temporal gyrus were not activated when upside-down emoticons were presented.

This shows that emoticons are perceived as faces only through configural processes in the occipito-temporal cortex. When that configuration is disrupted (through a process such as inversion), the emoticon no longer carries its meaning as a face. Since the features of emoticons are not eyes and noses and mouths, the feature processing regions of the brain do not act to pull the figure into the percept of a face.

Phonograms and logographs

Written English is based on phonograms, so the semantic meaning associated with the symbol must be decoded through an understanding of the speech sounds indicated by the characters.

However, some of the characters used to write in logosyllabic languages, such as Chinese, readily suggest their semantic meaning through their visual form. Therefore, it is understandable that in people familiar with such scripts, logographs evoke a similar — though not identical — brain electrical potential to faces.

Emoticons, like logographs, are readily understandable through their visual form, and so represent a new way of communicating in written English.

Proust’s attempt to convey the specifics of emotional experience was an amazing achievement. This is at least in part due to his insistence on finding original ways of describing familiar feelings.

Indeed, one of Proust’s greatest current proselytisers, Alain de Botton, points out that cliché is always absent from Proust’s work. Proust knew that one moment of happiness was different to another. And he knew that it would take time to understand the unique characters of happiness across our lives.

The emoticon is quick to write and, it seems, quick to perceive as a smiling face. Perhaps, though, it is worth our time to occasionally write more.

Owen Churches does not work for, consult to, own shares in or receive funding from any company or organisation that would benefit from this article, and has no relevant affiliations.

This article was originally published on The Conversation. Read the original article. The views expressed are those of the author and do not necessarily reflect the views of the publisher. This version of the article was originally published on Live Science.
