New 3-D Sound System to be Better than Stereo

When you put on a set of earphones, you hear the Rolling Stones or Yo-Yo Ma as if they were right between your ears.

What if you could put those artists out in front of you?

This is the goal of binaural sound, which takes into account the shape of your ears and your head to transform a recording into a three-dimensional listening experience.

"The idea is to make the ear drums move exactly as they would if they were there live," said Tony Tew of the University of York.

Bouncing sound

Unlike stereo and surround sound - which try to replicate "being there" by using several speakers emitting separate tracks - binaural sound filters a recording according to the path that sound waves travel to your ear drums, bouncing off your head and torso and then funneling down your outer ear, or pinna.

Because its two tracks are specific to each ear, binaural audio must employ earphones.

The idea of binaural recordings has been around almost as long as the phonograph, but the recordings were never individualized to a particular person's features. Instead, they were set up for a sort of average head.

"It effectively meant we were listening through another person's ears," Tew told LiveScience.

Tew and his colleagues are working on a way to have a person step into a small booth and come out a few minutes later with a reading of his or her binaural "signature." This information would plug into a next generation audio player, allowing listeners to hear effectively through their own ears.

Spatial filters

The mathematical form of the binaural signature is called a head-related transfer function (HRTF), but "since that is such a mouthful, we call it a spatial filter," Tew said.

The filter modifies a recording by altering the time delay, volume, and frequency response - three cues that the brain uses to locate a sound - for each earphone. The simplest to understand is time delay. A sound to the right of you will arrive at your left ear a fraction of a second later than at your right ear.
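To put a number on that time-delay cue, here is a minimal sketch in Python. It is not Tew's method and not a full spatial filter: it uses Woodworth's classic spherical-head approximation, and the head radius, speed of sound, and function names are all assumptions chosen for illustration. A personalized filter would replace the "average head" radius with measurements of the actual listener.

```python
import math

SPEED_OF_SOUND = 343.0  # m/s, assumed value for air at about 20 degrees C
HEAD_RADIUS = 0.0875    # m, assumed "average" head, not a personalized value


def interaural_time_difference(azimuth_deg, head_radius=HEAD_RADIUS,
                               speed=SPEED_OF_SOUND):
    """Arrival-time gap between the ears, in seconds (Woodworth approximation).

    azimuth_deg: 0 is straight ahead, 90 is directly to the right,
    -90 is directly to the left. A positive result means the sound
    reaches the right ear first.
    """
    theta = math.radians(azimuth_deg)
    return (head_radius / speed) * (theta + math.sin(theta))


def delay_in_samples(azimuth_deg, sample_rate=44100):
    """Same gap expressed in whole audio samples at the given sample rate."""
    return round(interaural_time_difference(azimuth_deg) * sample_rate)


if __name__ == "__main__":
    for angle in (0, 30, 60, 90):
        itd_ms = interaural_time_difference(angle) * 1000.0
        print(f"{angle:>3} degrees: {itd_ms:.3f} ms, "
              f"{delay_in_samples(angle)} samples at 44.1 kHz")
```

Under these assumptions, a sound directly to one side reaches the far ear roughly two-thirds of a millisecond late. Delaying one earphone channel by that amount supplies only the first of the three cues; volume and frequency response would still need their own adjustments.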

Because we all have distinct morphologies, a spatial filter needs to be personalized to effectively fool our brains. At present, the only way to get an accurate spatial filter is to use an array of loudspeakers and two microphones, one placed in each ear. This equipment is expensive, and the process can take a couple of hours.

Some military pilots have had their spatial filters measured for binaural sound. This allows the use of a 3-D warning system, which, Tew explained, can quickly draw a pilot's attention to possible danger.

But to make spatial filters more commercially available, Tew's team has eliminated the audio measurements. Instead, they have figured out a way to generate a spatial filter from a few hundred numbers that represent the physical features of a person's head.

These "head numbers" can be gleaned from visual images taken by a stereo camera. One complication is that a visual image cannot capture the folds in the ear, nor can it see past the hair to measure the scalp.

"We are optimistic that we can guess at the missing bits," Tew said.

Practical use

Besides immersing people in a virtual aural environment, spatial filters could be used to improve hearing aids, which currently do not take into account the effects of a person's ear and head shape.

"We should be able to tailor them for an individual," Tew said.

By increasing the amount of directional information, a hearing aid user should have an easier time focusing on one sound, while ignoring others.

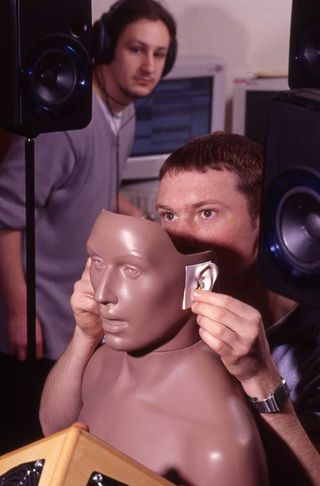

Tew's group plans to refine its mathematical transformation on 50 or so subjects. So far, the researchers have been working with a special mannequin called the Knowles Electronics Manikin for Acoustic Research, or KEMAR for short.

"In doing these measurements, sitting still is a big issue - KEMAR is just great for that," Tew said.
